Agent Builder is now generally available (GA). Get started with an Elastic Cloud Trial, and check out the Agent Builder documentation.

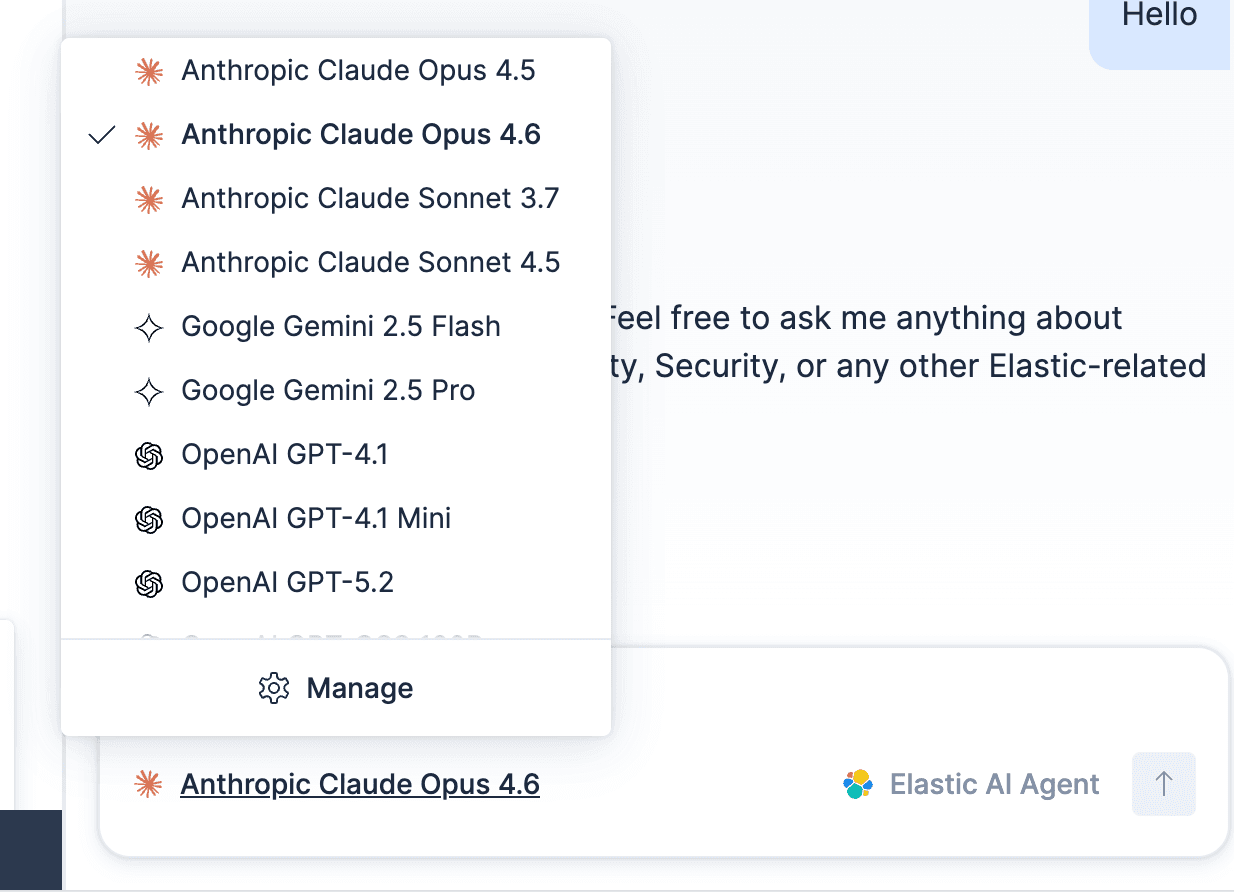

Today, we’re pleased to announce an expanded model catalog for Elastic Inference Service (EIS), making it easy to run fast, high-quality inference on managed GPUs, without setup or hosting complexity.

EIS already provides access to state-of-the-art large language models (LLMs) that power out-of-the-box AI capabilities across Elastic Agent Builder and Elastic AI Assistants, including automatic ingest, threat detection, problem investigation, and root cause analysis. We’re now extending this foundation with a broader catalog of managed models, giving developers more control over how agents reason, retrieve, and act.

In practice, this reflects a broader shift in how enterprises build AI systems. The idea of a single, all-purpose AI model no longer holds up. Real-world agent workflows require multiple models with different strengths, costs, and performance characteristics. With EIS, teams can either choose and switch models directly in Agent Builder, with zero setup, cost, or hosting overhead, or they can mix and match models in an agent workflow so each step uses the model best suited to the task.

Developers can use models from OpenAI, Anthropic, and Google directly in Elasticsearch, selecting different models for different agent steps while Elastic fully manages inference, scaling, and GPU execution for production agents.

An expanded catalog of managed models on EIS

The expanded EIS catalog now includes models optimized for different classes of tasks, from lightweight generation to large-context reasoning and embeddings for retrieval.

For generation, the catalog includes:

- Anthropic Claude Opus 4.5 and 4.6.

- Google Gemini 2.5 Flash.

- Google Gemini 2.5 Pro.

- OpenAI GPT-4.1 and GPT-4.1 Mini.

- OpenAI GPT-5.2.

- OpenAI GPT-OSS-120B.

For retrieval, EIS includes native Jina AI models, jina-embeddings-v3 and jina-embeddings-v5, which provide fast, high-quality embeddings for multilingual retrieval. The service also includes embedding models from Microsoft, OpenAI, Google, and Alibaba.
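A minimal sketch of wiring an EIS embedding model into retrieval. It assumes the Elasticsearch inference API's `elastic` service and a `semantic_text` field, and it only builds the REST requests with the Python standard library rather than sending them. The deployment URL, API key, inference ID, index name, and especially the `model_id` string are hypothetical; check which model IDs (or preconfigured endpoints) EIS exposes in your deployment before using them.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with your deployment URL, API key, and the
# model ID that EIS lists for the embedding model you want to use.
ES_URL = "https://my-deployment.es.example.com"
API_KEY = "<your-api-key>"
INFERENCE_ID = "my-jina-embeddings"   # any endpoint name you choose
EIS_MODEL_ID = "jina-embeddings-v3"   # assumed model_id string on EIS


def es_request(method: str, path: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON request to the Elasticsearch REST API."""
    return urllib.request.Request(
        url=f"{ES_URL}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"ApiKey {API_KEY}"},
        method=method,
    )


# 1. Create a text_embedding inference endpoint backed by the `elastic` (EIS) service.
create_endpoint = es_request(
    "PUT",
    f"/_inference/text_embedding/{INFERENCE_ID}",
    {"service": "elastic", "service_settings": {"model_id": EIS_MODEL_ID}},
)

# 2. Map a semantic_text field to that endpoint so documents are embedded at ingest.
create_index = es_request(
    "PUT",
    "/hr-policies",
    {"mappings": {"properties": {"content": {"type": "semantic_text", "inference_id": INFERENCE_ID}}}},
)

if __name__ == "__main__":
    for req in (create_endpoint, create_index):
        print(req.get_method(), req.full_url)
        # To send against a real cluster:
        # with urllib.request.urlopen(req) as resp:
        #     print(resp.status, resp.read().decode())
```

Binding the embedding model to the field mapping keeps model choice out of application code: switching to another embedding model means pointing the field at a different inference endpoint (and reindexing), not rewriting ingest logic.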

Choosing the right models for agent tasks

With EIS, model choice becomes a design decision inside the agent, rather than an operational concern. Agents can select models based on the role they play, without changing how inference is deployed or scaled.

To see how this plays out in practice, consider a few common agent scenarios.

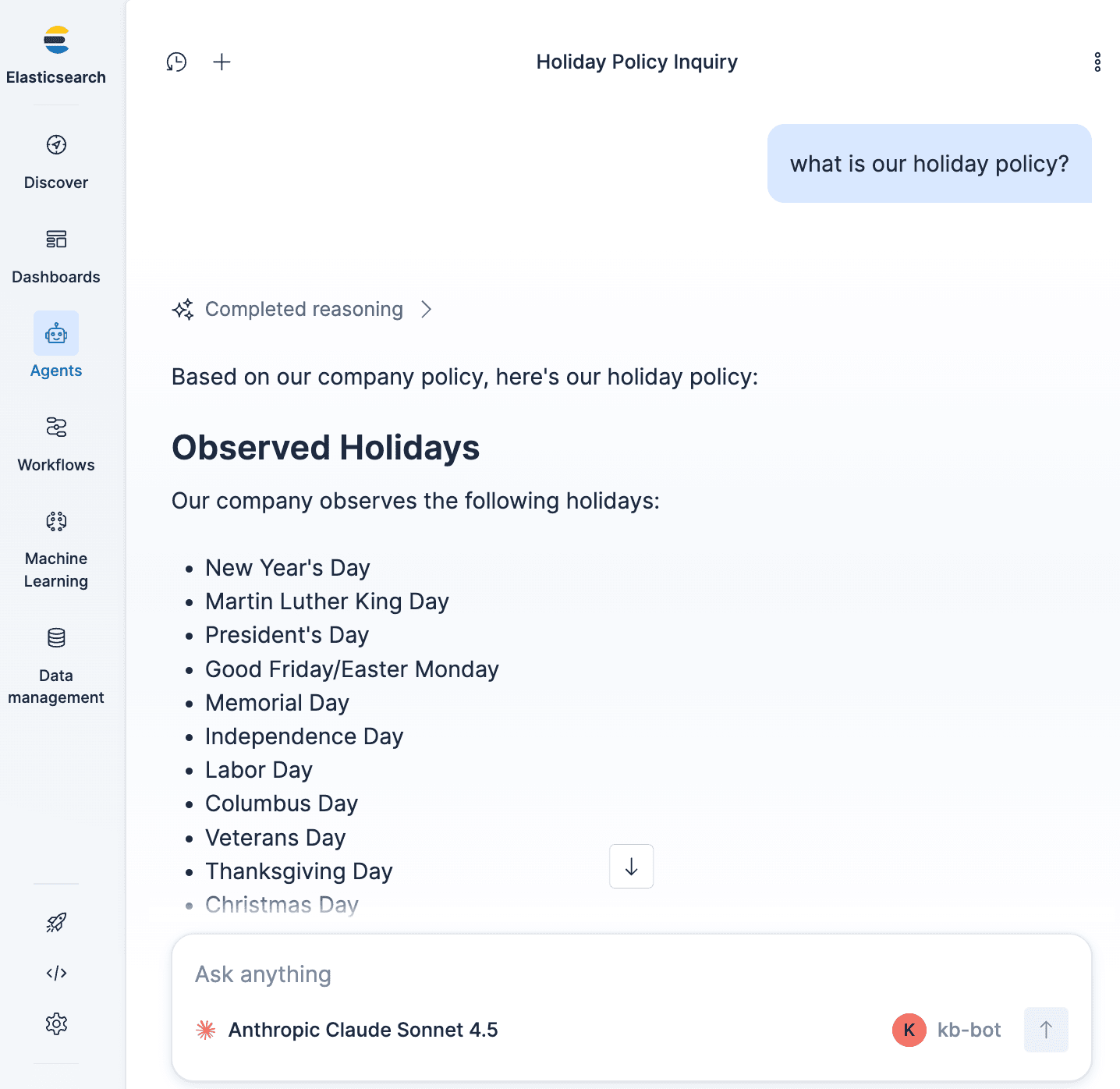

Simple informational query

Simple interactions, such as answering “What is our holiday policy?”, do not require an expensive frontier model and can be handled by a fast, low-cost option.

- Task: “What is our holiday policy?”

- Pattern: Retrieve and summarize.

- Model choice: Fast, low-cost generation model.

You can also configure this through the API by selecting the model you want to use for the request.

This step relies primarily on retrieval quality. A lightweight model is sufficient to summarize a small set of documents quickly.
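A minimal Python sketch of the retrieve-and-summarize pattern as two raw REST requests. It assumes a `semantic_text` field named `content` in a hypothetical `hr-policies` index and a hypothetical chat completion endpoint, `fast-chat`, backed by a low-cost EIS model; it is not the original API example from this post. The code only builds the requests: the chat completion API streams its answer as server-sent events, which a real client would parse.

```python
import json
import urllib.request

# Hypothetical placeholders for this sketch.
ES_URL = "https://my-deployment.es.example.com"
API_KEY = "<your-api-key>"
INDEX = "hr-policies"
FAST_MODEL = "fast-chat"  # inference ID of a fast, low-cost chat model on EIS


def es_request(method: str, path: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON request to the Elasticsearch REST API."""
    return urllib.request.Request(
        url=f"{ES_URL}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"ApiKey {API_KEY}"},
        method=method,
    )


def retrieve(question: str, k: int = 3) -> urllib.request.Request:
    """Step 1: semantic search over the `content` semantic_text field."""
    return es_request(
        "POST",
        f"/{INDEX}/_search",
        {"size": k, "query": {"semantic": {"field": "content", "query": question}}},
    )


def summarize(question: str, passages: list[str]) -> urllib.request.Request:
    """Step 2: ask the lightweight model to answer only from the retrieved passages."""
    context = "\n\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    messages = [
        {"role": "system", "content": "Answer briefly using only the numbered passages. Cite them."},
        {"role": "user", "content": f"Passages:\n{context}\n\nQuestion: {question}"},
    ]
    return es_request("POST", f"/_inference/chat_completion/{FAST_MODEL}/_stream", {"messages": messages})


question = "What is our holiday policy?"
search_req = retrieve(question)
# In a real run, the passages come from the `content` of the top search hits.
answer_req = summarize(question, ["<text of hit 1>", "<text of hit 2>"])
```

Because the model only has to restate a few short passages, swapping `FAST_MODEL` for a more expensive endpoint would mostly add cost and latency; answer quality here depends on what `retrieve` returns.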

Moderate capability

More complex tasks may benefit from a more capable generation model, without necessarily requiring the most expensive reasoning model available.

- Task: “Compare our holiday policy with new labor laws in France and draft an email.”

- Pattern: Retrieve relevant documents, compare policy details across sources, and generate output such as a draft email.

- Model choice: More capable generation model.

You can also configure this through the API by selecting a more capable model for the request.

This task requires synthesis across multiple sources and structured output but doesn’t need the heaviest frontier reasoning model.
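The moderate case can be sketched the same way, as one request to a more capable model. This is an illustrative assumption, not the original API example: `capable-chat` is a hypothetical inference ID, and the passages are placeholders for documents you would retrieve from your policy index and from a source of French labor law. Tagging each passage with a source ID lets the model cite where each difference comes from.

```python
import json
import urllib.request

# Hypothetical placeholders for this sketch.
ES_URL = "https://my-deployment.es.example.com"
API_KEY = "<your-api-key>"
CAPABLE_MODEL = "capable-chat"  # inference ID of a more capable chat model on EIS


def es_request(method: str, path: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON request to the Elasticsearch REST API."""
    return urllib.request.Request(
        url=f"{ES_URL}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"ApiKey {API_KEY}"},
        method=method,
    )


def tag_sources(label: str, texts: list[str]) -> str:
    """Prefix each passage with a citable ID such as [LAW-2]."""
    return "\n".join(f"[{label}-{i + 1}] {t}" for i, t in enumerate(texts))


def compare_and_draft(policy: list[str], regulations: list[str], recipient: str) -> urllib.request.Request:
    """One chat request that compares two source sets, then drafts an email."""
    instructions = (
        "1. List each point where the internal policy differs from the regulations, citing source IDs.\n"
        f"2. Draft a short email to {recipient} summarizing the required changes."
    )
    user_content = (
        f"Internal policy:\n{tag_sources('POLICY', policy)}\n\n"
        f"French labor law excerpts:\n{tag_sources('LAW', regulations)}\n\n"
        f"{instructions}"
    )
    messages = [
        {"role": "system", "content": "Compare documents carefully and cite only the sources provided."},
        {"role": "user", "content": user_content},
    ]
    return es_request("POST", f"/_inference/chat_completion/{CAPABLE_MODEL}/_stream", {"messages": messages})


req = compare_and_draft(
    ["<policy passage>"],
    ["<regulation passage 1>", "<regulation passage 2>"],
    "the HR team",
)
```

Asking for the comparison before the email gives the model an explicit intermediate step, which is the kind of multi-source synthesis where a mid-tier model is worth its extra cost over the lightweight one.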

Investigation or audit task (high capability)

- Task: Review a large document set to identify compliance risks.

- Pattern: Multistep reasoning over large context, where the model evaluates information across many documents and synthesizes findings before producing a final judgment.

- Model choice: Frontier or large-context model.

You can try this through the API as well, selecting a frontier or large-context model for the request.

Because the task requires deeper reasoning and consistent evaluation across many inputs, output quality matters more. A high-capability model is therefore appropriate for this step.
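For the audit case, a large-context model may accept the whole document set in one request. When the set is bigger than even that context window, one common approach (an assumption here, not a description of how Agent Builder works internally) is to review documents in batches and then run a synthesis step. The sketch below builds those requests; `frontier-chat`, the character budget, and the prompts are all hypothetical.

```python
import json
import urllib.request

# Hypothetical placeholders for this sketch.
ES_URL = "https://my-deployment.es.example.com"
API_KEY = "<your-api-key>"
FRONTIER_MODEL = "frontier-chat"  # inference ID of a frontier / large-context model
MAX_BATCH_CHARS = 200_000         # illustrative; characters are only a rough proxy for tokens


def es_request(method: str, path: str, body: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON request to the Elasticsearch REST API."""
    return urllib.request.Request(
        url=f"{ES_URL}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"ApiKey {API_KEY}"},
        method=method,
    )


def chat(system: str, user: str) -> urllib.request.Request:
    """Streaming chat completion request against the frontier model endpoint."""
    messages = [{"role": "system", "content": system}, {"role": "user", "content": user}]
    return es_request("POST", f"/_inference/chat_completion/{FRONTIER_MODEL}/_stream", {"messages": messages})


def pack_batches(docs: list[tuple[str, str]], max_chars: int = MAX_BATCH_CHARS) -> list[list[tuple[str, str]]]:
    """Greedily group (doc_id, text) pairs so each batch stays within max_chars.
    A single document longer than max_chars gets a batch of its own (no truncation)."""
    batches: list[list[tuple[str, str]]] = []
    current: list[tuple[str, str]] = []
    size = 0
    for doc_id, text in docs:
        if current and size + len(text) > max_chars:
            batches.append(current)
            current, size = [], 0
        current.append((doc_id, text))
        size += len(text)
    if current:
        batches.append(current)
    return batches


def review_request(batch: list[tuple[str, str]]) -> urllib.request.Request:
    """Step 1: ask for per-document compliance risks with quoted evidence."""
    corpus = "\n\n".join(f'<doc id="{doc_id}">\n{text}\n</doc>' for doc_id, text in batch)
    return chat(
        "For each document, list compliance risks with quoted evidence and the doc id. "
        "Write 'none' for documents without risks.",
        corpus,
    )


def synthesis_request(findings: list[str]) -> urllib.request.Request:
    """Step 2: consolidate batch findings into one ranked judgment."""
    joined = "\n\n".join(f"Batch {i + 1} findings:\n{f}" for i, f in enumerate(findings))
    return chat(
        "Merge these findings, remove duplicates, rank risks by severity, "
        "and finish with an overall compliance judgment.",
        joined,
    )


docs = [("contract-001", "<contract text>"), ("policy-017", "<policy text>")]
review_reqs = [review_request(b) for b in pack_batches(docs)]
# After streaming each review response, collect its text and synthesize:
final_req = synthesis_request(["<text returned for batch 1>"])
```

Both steps go to the same high-capability endpoint here because consistent judgment across inputs is the point of this scenario; a cheaper model for the per-batch pass would be a reasonable variation to test.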

EIS also enables more advanced orchestration patterns. Enterprises increasingly recognize that using a frontier model for every agent step is inefficient.

With Agent Builder and Elastic Workflows, teams can design agents where each subtask is executed by the most efficient model for the job, based on cost, complexity, and accuracy requirements.
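Per-step model selection can be reduced to a small routing rule: pick the cheapest model that meets each subtask's capability and context requirements. This is a hypothetical illustration, not how Agent Builder or Elastic Workflows choose models; the inference IDs, capability tiers, relative costs, and context budgets are made-up values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    inference_id: str       # hypothetical EIS inference endpoint ID
    capability: int         # 1 = lightweight, 2 = capable, 3 = frontier / large context
    relative_cost: float    # illustrative cost units, not real pricing
    max_context_chars: int  # illustrative context budget, in characters


# Illustrative catalog; real values depend on the models you enable.
CATALOG = [
    ModelOption("fast-chat", 1, 1.0, 100_000),
    ModelOption("capable-chat", 2, 4.0, 400_000),
    ModelOption("frontier-chat", 3, 15.0, 1_000_000),
]


def pick_model(required_capability: int, context_chars: int, catalog=CATALOG) -> ModelOption:
    """Return the cheapest model that meets both the capability and context requirements."""
    candidates = [
        m for m in catalog
        if m.capability >= required_capability and m.max_context_chars >= context_chars
    ]
    if not candidates:
        raise ValueError("no model in the catalog satisfies these requirements")
    return min(candidates, key=lambda m: m.relative_cost)


# Each agent subtask declares what it needs: (capability, approximate context size).
PLAN = {
    "answer_faq": (1, 20_000),
    "compare_and_draft": (2, 80_000),
    "compliance_audit": (3, 600_000),
}

routing = {step: pick_model(cap, ctx).inference_id for step, (cap, ctx) in PLAN.items()}
print(routing)
# {'answer_faq': 'fast-chat', 'compare_and_draft': 'capable-chat', 'compliance_audit': 'frontier-chat'}
```

Note that context size can force an upgrade on its own: a lightweight task over a very large input still routes to the large-context model, because the cheaper options cannot hold it.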

Model-as-judge pattern (quality control)

- Task: Validate an agent’s output using a second model.

- Pattern: Generate and evaluate.

In an Elastic Workflow, the agent can use one model to generate a response and a second model to evaluate its quality, adding a validation layer for the result. Elastic Workflows, the automation engine built into Elasticsearch, let developers combine reliable scripted automation with AI-driven steps for tasks that require reasoning.

The multimodel approach enables new reliability patterns by separating generation from evaluation, allowing one model to produce a response and another to validate it. Today, teams can implement this by pairing a general-purpose generation model with a lighter-weight evaluation model.
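The control flow of the generate-and-evaluate pattern does not depend on any particular API, so this sketch keeps the two model calls as plain functions. It is a hypothetical illustration, not an Elastic Workflows definition: in a real workflow, `generate` would call a general-purpose generation endpoint and `judge` a lighter-weight evaluation endpoint whose output you parse into a score and feedback. The threshold and attempt limit are arbitrary example values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    score: float    # quality score in [0.0, 1.0] assigned by the judge model
    feedback: str   # short critique passed back to the generator


# (task, feedback) -> draft, and (task, draft) -> verdict. In practice each callable
# would wrap a different inference endpoint; both are hypothetical here.
Generate = Callable[[str, str], str]
Judge = Callable[[str, str], Verdict]


def generate_and_judge(task: str, generate: Generate, judge: Judge,
                       threshold: float = 0.8, max_attempts: int = 3) -> tuple[str, Verdict, int]:
    """Generate a draft, let a second model score it, and retry with feedback.

    Returns the first draft that meets the threshold, otherwise the best-scoring
    draft seen, together with its verdict and the number of attempts used."""
    best_draft, best_verdict = "", Verdict(score=-1.0, feedback="")
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        draft = generate(task, feedback)
        verdict = judge(task, draft)
        if verdict.score > best_verdict.score:
            best_draft, best_verdict = draft, verdict
        if verdict.score >= threshold:
            return draft, verdict, attempt
        feedback = verdict.feedback
    return best_draft, best_verdict, max_attempts


# Stand-ins for model calls, so the control flow can be run offline.
def fake_generate(task: str, feedback: str) -> str:
    return "draft v2" if feedback else "draft v1"


def fake_judge(task: str, draft: str) -> Verdict:
    if draft == "draft v2":
        return Verdict(0.9, "ok")
    return Verdict(0.5, "Cite the policy section you relied on.")


draft, verdict, attempts = generate_and_judge("Summarize the holiday policy.", fake_generate, fake_judge)
print(draft, verdict.score, attempts)  # draft v2 0.9 2
```

Keeping the best-scoring draft means the loop still returns something when no attempt clears the threshold; whether to use it or escalate to a human reviewer is a design choice for the workflow.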

Over time, this pattern naturally lends itself to specialized judging and safeguard models designed specifically for validation, policy checks, and quality control. As these models become available, EIS makes it straightforward to introduce them into agent workflows without changing how inference is deployed or managed.

What’s next

EIS is actively evolving, with more models on the way. You can track what’s coming next and what we’re currently building on the Elastic public roadmap.

Get started

Elastic Inference Service makes it easy to start with default models and evolve toward sophisticated, multimodel agent workflows over time, all within Elasticsearch. Whether you’re building global retrieval-augmented generation (RAG) systems, search, or agentic workflows that need reliable context, Elastic now gives you high-performance models out of the box, along with the operational simplicity to move from prototype to production with confidence.

All Elastic Cloud trials have access to Elastic Inference Service. Try it now on Elastic Cloud Serverless or Elastic Cloud Hosted, or use EIS via Cloud Connect with your self-managed cluster.