CrowdStrike Integration

| Version | 3.21.0 |
| Subscription level | Basic |
| Developed by | Elastic |
| Ingestion method(s) | API, AWS S3, File |
| Minimum Kibana version(s) | 9.0.0, 8.19.0 |

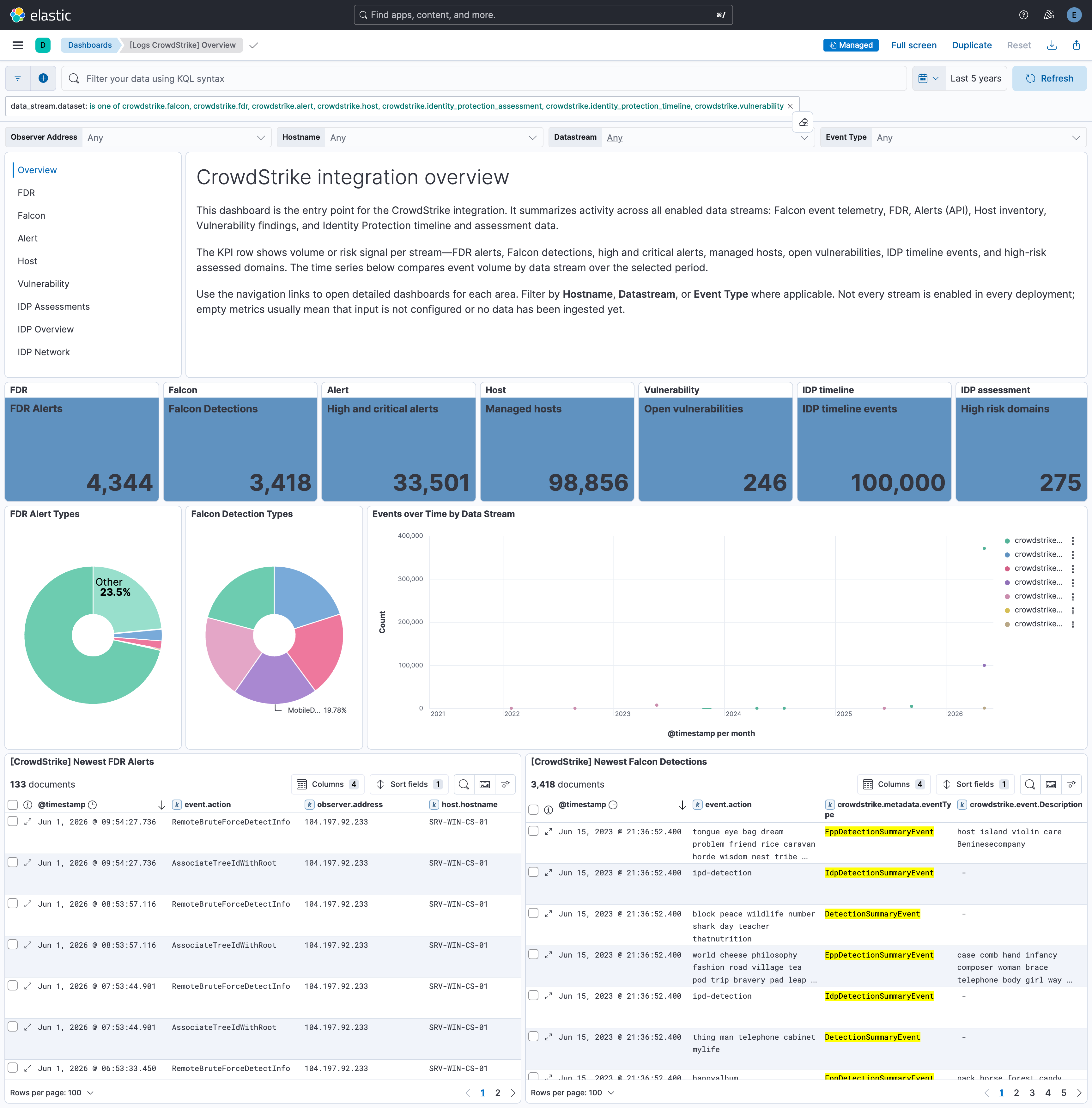

The CrowdStrike integration allows you to efficiently connect your CrowdStrike Falcon platform to Elastic for seamless onboarding of alerts and telemetry from CrowdStrike Falcon and Falcon Data Replicator, including Identity Protection data collected over GraphQL. Elastic Security can leverage this data for security analytics including correlation, visualization, and incident response.

For a demo, refer to the following video.

This integration is compatible with CrowdStrike Falcon SIEM Connector v2.0, REST API, and CrowdStrike Event Streams API.

The integration collects data from multiple sources within CrowdStrike Falcon and ingests it into Elasticsearch for security analysis and visualization:

CrowdStrike Event Streams — Real-time security events (auth, CSPM, firewall, user activity, XDR, detections). You can collect this data in two ways:

- Falcon SIEM Connector — A pre-built integration that connects CrowdStrike Falcon with your SIEM. The connector collects event stream data and writes it to files; this integration reads from the connector's output path (the

output_pathincs.falconhoseclient.cfg). - Event Streams API — Continuously streams security logs from CrowdStrike Falcon for proactive monitoring and threat detection.

Data from either method is indexed into the

falcondataset in Elasticsearch.- Falcon SIEM Connector — A pre-built integration that connects CrowdStrike Falcon with your SIEM. The connector collects event stream data and writes it to files; this integration reads from the connector's output path (the

CrowdStrike REST API — Pulls alerts, host inventory, and vulnerability data (indexed into the

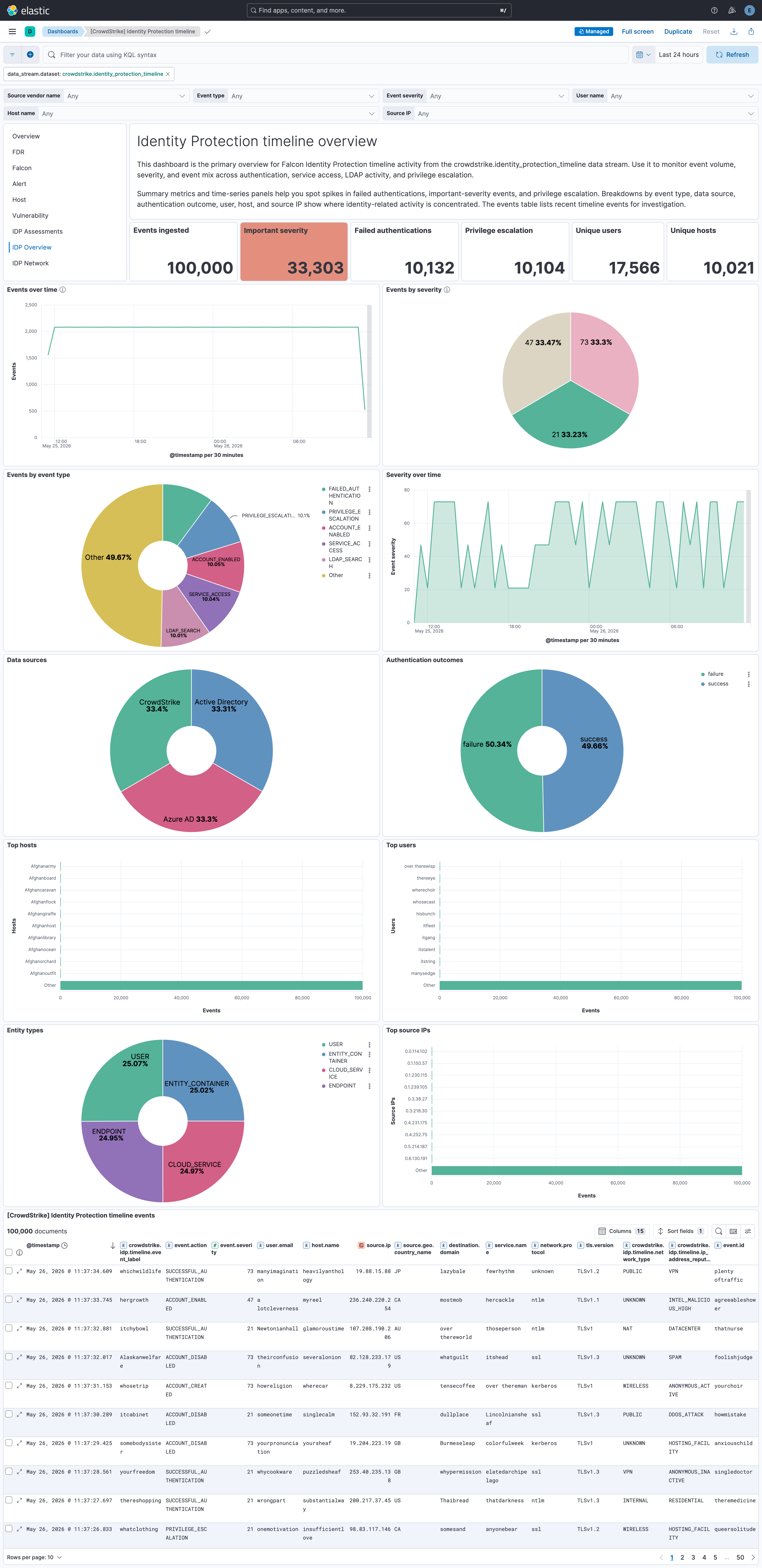

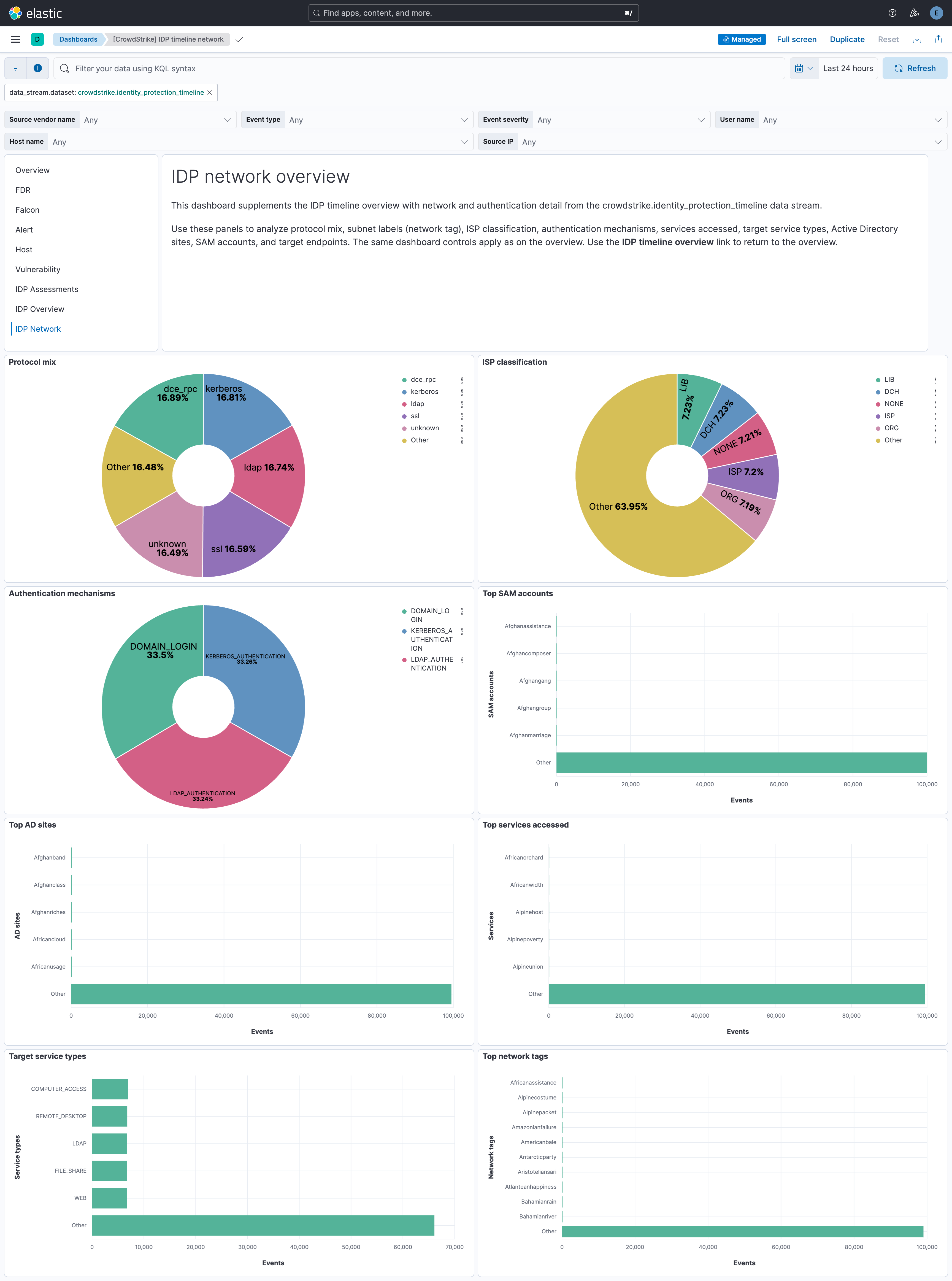

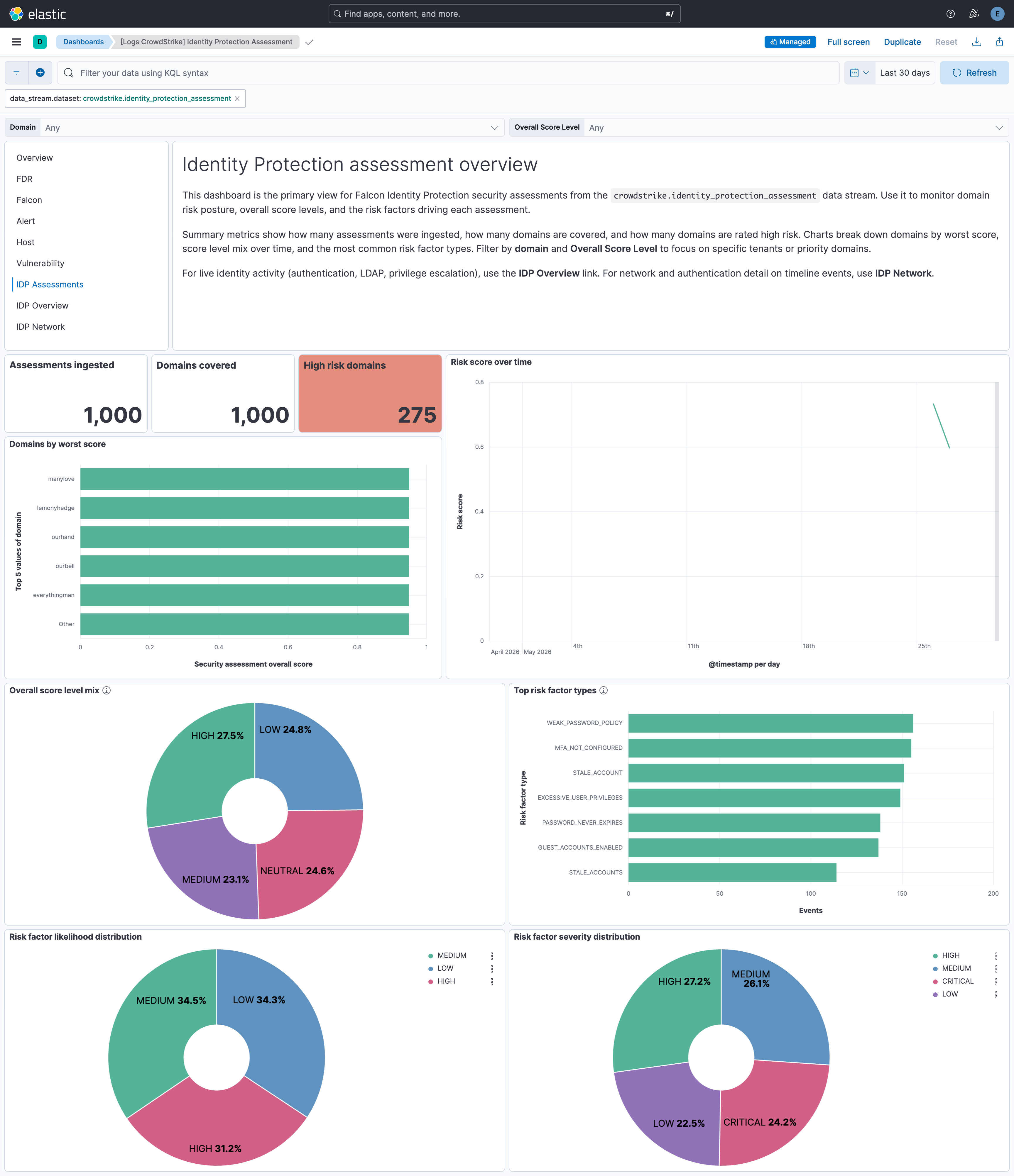

alert,host, andvulnerabilitydatasets).Identity Protection (GraphQL) — Additional datasets use the CrowdStrike Identity Protection GraphQL API (

/identity-protection/combined/graphql/v1).- Security assessments (

identity_protection_assessment) — Discovers domains, then ingests per-domain security assessment results. - Timeline (

identity_protection_timeline) — Collects identity protection timeline events including authentication, service access, LDAP search, account lifecycle, and privilege escalation activity.

NoteGovCloud CID users must enable the GovCloud option in the integration configuration to query the

/devices/queries/devices/v1endpoint instead of the unsupported/devices/combined/devices/v1endpoint.- Security assessments (

Falcon Data Replicator (FDR) — Batch data from your endpoints, cloud workloads, and identities using the Falcon platform's lightweight agent. Data is written to CrowdStrike-managed S3; this integration consumes it using SQS notifications (or from your own S3 bucket if you use the FDR tool to replicate). Logs are indexed into the

fdrdataset in Elasticsearch.

- Event Streams (falcon dataset)

- FDR (fdr dataset)

- Alerts (alert dataset)

- Hosts (host dataset)

- Vulnerability (vulnerability dataset)

- Identity Protection (GraphQL):

  - Security assessments (identity_protection_assessment dataset)

  - Timeline (identity_protection_timeline dataset)
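As a rough illustration of how these dataset names turn into index patterns you can query, the sketch below builds `logs-crowdstrike.<dataset>-<namespace>` names. It assumes every dataset is a `logs` data stream that follows the usual Elastic `type-dataset-namespace` naming scheme; the helper name `index_pattern` is hypothetical and not part of the integration.

```python
# Dataset names are taken from the list above. The "logs-crowdstrike.<dataset>-<namespace>"
# layout is an assumption based on the general Elastic data stream naming scheme.
DATASETS = (
    "falcon",
    "fdr",
    "alert",
    "host",
    "vulnerability",
    "identity_protection_assessment",
    "identity_protection_timeline",
)


def index_pattern(dataset: str, namespace: str = "*") -> str:
    """Return the index pattern for one CrowdStrike dataset (hypothetical helper)."""
    if dataset not in DATASETS:
        raise ValueError(f"unknown CrowdStrike dataset: {dataset}")
    return f"logs-crowdstrike.{dataset}-{namespace}"


if __name__ == "__main__":
    for name in DATASETS:
        print(index_pattern(name))
```

With the default namespace wildcard, `index_pattern("fdr")` gives the same pattern used in the ES|QL examples in this document.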

This section describes the requirements and configuration details for each supported data source.

To collect data using the Falcon SIEM Connector, you need the file path where the connector stores event data received from the Event Streams.

This is the same as the output_path setting in the cs.falconhoseclient.cfg configuration file.

The integration supports only JSON output format from the Falcon SIEM Connector. Other formats such as Syslog and CEF are not supported.

Additionally, this integration collects logs only through the file system. Ingestion using a Syslog server is not supported.

The log files are written to multiple rotated output files based on the output_path setting in the cs.falconhoseclient.cfg file. The default output location for the Falcon SIEM Connector is /var/log/crowdstrike/falconhoseclient/output.

By default, files named output* in the /var/log/crowdstrike/falconhoseclient directory contain valid JSON event data and should be used as the source for ingestion.

Files with names like cs.falconhoseclient-*.log in the same directory are primarily used for logging internal operations of the Falcon SIEM Connector and are not intended to be consumed by this integration.
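The file selection rule described above can be sketched as a simple name filter. This is only an illustration of the rule, not how the integration is implemented; the rotated file names in the example (such as `output.1`) are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def select_event_files(names: Iterable[str]) -> List[str]:
    """Keep only files matching output*, which hold the JSON event data.

    Connector log files such as cs.falconhoseclient-*.log do not match the
    pattern and are therefore skipped.
    """
    return sorted(name for name in names if fnmatchcase(name, "output*"))


if __name__ == "__main__":
    # Hypothetical directory listing of /var/log/crowdstrike/falconhoseclient
    listing = ["output", "output.1", "cs.falconhoseclient-2024-01-01.log"]
    print(select_event_files(listing))
```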

By default, the configuration file for the Falcon SIEM Connector is located at /opt/crowdstrike/etc/cs.falconhoseclient.cfg, which provides configuration options related to the events collected. The EventTypeCollection and EventSubTypeCollection sections list which event types the connector collects.

The following parameters from your CrowdStrike instance are required:

Client ID

Client Secret

Token URL

API Endpoint URL

CrowdStrike App ID

Required scopes for event streams:

| Data Stream | Scope |
|---|---|
| Event Stream | read: Event streams |

You can use the Falcon SIEM Connector as an alternative to the Event Streams API.

The following event types are supported for CrowdStrike Event Streams (whether you use the Falcon SIEM Connector or the Event Streams API):

- CustomerIOCEvent

- DataProtectionDetectionSummaryEvent

- DetectionSummaryEvent

- EppDetectionSummaryEvent

- IncidentSummaryEvent

- UserActivityAuditEvent

- AuthActivityAuditEvent

- FirewallMatchEvent

- RemoteResponseSessionStartEvent

- RemoteResponseSessionEndEvent

- CSPM Streaming events

- CSPM Search events

- IDP Incidents

- IDP Summary events

- Mobile Detection events

- Recon Notification events

- XDR Detection events

- Scheduled Report Notification events

The following parameters from your CrowdStrike instance are required:

Client ID

Client Secret

Token URL

API Endpoint URL

Required scopes for each data stream:

| Data Stream | Scope |
|---|---|
| Alert | read:alert |
| Host | read:host |
| Vulnerability | read:vulnerability |
| Identity protection assessment | read:Identity Protection Assessment, write:Identity Protection GraphQL |
| Identity protection timeline | read:Identity Protection Timeline, write:Identity Protection GraphQL |

The CrowdStrike Falcon Data Replicator allows CrowdStrike users to replicate data from CrowdStrike-managed S3 buckets. When new data is written to S3, notifications are sent to a CrowdStrike-managed SQS queue (using S3 event notifications configured in AWS), so this integration can consume them.

This integration can be used in two ways. It can consume SQS notifications directly from the CrowdStrike-managed SQS queue, or it can be used with the FDR tool, which replicates the data to a self-managed S3 bucket that the integration then reads from.

In both cases SQS messages are deleted after they are processed. This allows you to operate more than one Elastic Agent with this integration if needed and not have duplicate events, but it means you cannot ingest the data a second time.

This is the simplest way to set up the integration, and also the default.

You need to set the integration up with the SQS queue URL provided by CrowdStrike FDR.

This option can be used if you want to archive the raw CrowdStrike data.

You need to follow the steps below:

- Create an S3 bucket to receive the logs.

- Create an SQS queue.

- Configure your S3 bucket to send object created notifications to your SQS queue.

- Follow the FDR tool instructions to replicate data to your own S3 bucket.

- Configure the integration to read from your self-managed SQS queue.
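To make the notification flow in the steps above more concrete, the sketch below extracts bucket and object key pairs from the body of an SQS message. It assumes the message carries the standard Amazon S3 event notification JSON (`Records[].s3.bucket.name` and `Records[].s3.object.key`, with URL-encoded keys). The integration does this work for you; the function name and sample values are hypothetical.

```python
import json
from typing import List, Tuple
from urllib.parse import unquote_plus


def s3_objects_from_sqs_body(body: str) -> List[Tuple[str, str]]:
    """Return (bucket, key) pairs found in an S3 event notification body."""
    message = json.loads(body)
    objects = []
    # Messages without "Records" (for example an S3 test event) yield no objects.
    for record in message.get("Records", []):
        s3 = record.get("s3", {})
        bucket = s3.get("bucket", {}).get("name")
        key = s3.get("object", {}).get("key")
        if bucket and key:
            # Object keys are URL-encoded in S3 event notifications.
            objects.append((bucket, unquote_plus(key)))
    return objects


if __name__ == "__main__":
    # Hypothetical notification for a self-managed archive bucket
    sample = json.dumps(
        {"Records": [{"s3": {"bucket": {"name": "my-fdr-archive"},
                             "object": {"key": "data/part-00000.gz"}}}]}
    )
    print(s3_objects_from_sqs_body(sample))
```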

While the FDR tool can replicate the files from S3 to your local file system, this integration cannot read those files because they are gzip compressed, and the log file input does not support reading compressed files.

AWS credentials are required for running this integration if you want to use the S3 input.

- access_key_id: first part of access key.
- secret_access_key: second part of access key.
- session_token: required when using temporary security credentials.
- credential_profile_name: profile name in shared credentials file.
- shared_credential_file: directory of the shared credentials file.
- endpoint: URL of the entry point for an AWS web service.
- role_arn: AWS IAM Role to assume.

There are three types of AWS credentials that can be used:

- access keys,

- temporary security credentials, and

- IAM role ARN.

AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY are the two parts of access keys. They are long-term credentials for an IAM user or the AWS account root user. See AWS Access Keys and Secret Access Keys for more details.

Temporary security credentials have a limited lifetime and consist of an access key ID, a secret access key, and a security token, which are typically returned from GetSessionToken. MFA-enabled IAM users need to submit an MFA code when calling GetSessionToken. default_region identifies the AWS Region whose servers you want to send your first API request to by default. This is typically the Region closest to you, but it can be any Region. See Temporary Security Credentials for more details.

The AWS CLI command aws sts get-session-token can be used to generate temporary credentials.

For example, with MFA enabled:

aws sts get-session-token --serial-number arn:aws:iam::1234:mfa/your-email@example.com --duration-seconds 129600 --token-code 123456

Because temporary security credentials are short-lived, you need to generate new ones after they expire and manually update the integration configuration in order to continue collecting data. Data loss occurs if the configuration is not updated with new credentials before the old ones expire.
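One way to avoid being caught out by expiry is to check how much lifetime the temporary credentials have left. The sketch below is a minimal example under two assumptions: that you have kept the expiration timestamp returned when the credentials were issued, and that it is an ISO 8601 string with an explicit UTC offset. The function name and sample values are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def expires_within(expiration: str, now: datetime, margin: timedelta) -> bool:
    """Return True if the credentials expire within `margin` of `now`.

    `expiration` is assumed to be an ISO 8601 timestamp with a UTC offset,
    for example "2024-01-01T12:30:00+00:00".
    """
    expires_at = datetime.fromisoformat(expiration)
    return expires_at - now <= margin


if __name__ == "__main__":
    # The --duration-seconds value 129600 used in the example above is 36 hours.
    print(timedelta(seconds=129600) == timedelta(hours=36))
    now = datetime.now(timezone.utc)
    soon = (now + timedelta(minutes=30)).isoformat()
    print(expires_within(soon, now, timedelta(hours=1)))
```

A check like this could run on a schedule and alert you to rotate the credentials in the integration configuration before collection stops.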

An IAM role is an IAM identity that you can create in your account that has specific permissions that determine what the identity can and cannot do in AWS.

A role does not have standard long-term credentials such as a password or access keys associated with it. Instead, when you assume a role, it provides you with temporary security credentials for your role session. IAM role Amazon Resource Name (ARN) can be used to specify which AWS IAM role to assume to generate temporary credentials.

See AssumeRole API documentation for more details.

1. Use access keys: Access keys include `access_key_id`, `secret_access_key` and/or `session_token`.

2. Use `role_arn`: `role_arn` is used to specify which AWS IAM role to assume for generating temporary credentials. If `role_arn` is given, the package will check if access keys are given. If not, the package will check for a credential profile name. If neither is given, the default credential profile will be used. Ensure credentials are given under either a credential profile or access keys.

3. Use `credential_profile_name` and/or `shared_credential_file`: If `access_key_id`, `secret_access_key` and `role_arn` are all not given, then the package will check for `credential_profile_name`. If you use different credentials for different tools or applications, you can use profiles to configure multiple access keys in the same configuration file. If there is no `credential_profile_name` given, the default profile will be used. `shared_credential_file` is optional to specify the directory of your shared credentials file. If it's empty, the default directory will be used. In Windows, the shared credentials file is at `C:\Users\<yourUserName>\.aws\credentials`. For Linux, macOS or Unix, the file is located at `~/.aws/credentials`. See Create Shared Credentials File for more details.
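The precedence described above can be summarized in a few lines. The sketch below only mirrors the order stated in the text (access keys first, then a named profile, then the default profile, with `role_arn` layered on top); it is not the package's actual implementation, and the function name and return shape are hypothetical.

```python
from typing import Dict, Optional


def resolve_credentials(cfg: Dict[str, str]) -> Dict[str, Optional[str]]:
    """Describe which base credentials would be used and whether a role is assumed."""
    if cfg.get("access_key_id") and cfg.get("secret_access_key"):
        base = "access_keys"
    elif cfg.get("credential_profile_name"):
        base = "profile:" + cfg["credential_profile_name"]
    else:
        # Neither access keys nor a profile name were given.
        base = "profile:default"
    # role_arn is optional; when present, the base credentials are used to assume it.
    return {"base": base, "assume_role": cfg.get("role_arn")}


if __name__ == "__main__":
    print(resolve_credentials({"role_arn": "arn:aws:iam::1234:role/example"}))
    print(resolve_credentials({"credential_profile_name": "fdr"}))
```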

The FDR dataset includes:

- Events generated by the Falcon sensor on your hosts

- DataProtectionDetectionSummaryEvent (Data Protection detection summary)

- File Integrity Monitor: FileIntegrityMonitorRuleMatched and FileIntegrityMonitorRuleMatchedEnriched events

- EppDetectionSummaryEvent (EPP detection summary)

- CSPM: Indicators of Misconfiguration (IOM) and Indicators of Attack (IOA) events

- In Kibana, go to Management > Integrations.

- In the "Search for integrations" search bar, type CrowdStrike.

- Click the CrowdStrike integration from the search results.

- Click the Add CrowdStrike button to add the integration.

- Configure the integration.

- Click Save and Continue to save the integration.

Agentless integrations allow you to collect data without having to manage Elastic Agent in your cloud. They make manual agent deployment unnecessary, so you can focus on your data instead of the agent that collects it. For more information, refer to Agentless integrations and the Agentless integrations FAQ.

Agentless deployments are only supported in Elastic Serverless and Elastic Cloud environments. This functionality is in beta and is subject to change. Beta features are not subject to the support SLA of official GA features.

Elastic Agent must be installed. For more details, check the Elastic Agent installation instructions. You can install only one Elastic Agent per host. Elastic Agent is required to stream data from the AWS SQS, Event Streams API, REST API, or SIEM Connector and ship the data to Elastic, where the events will then be processed using the integration's ingest pipelines.

This error can occur for the following reasons:

- Too many records in the response.

- The pagination token has expired. Tokens expire 120 seconds after a call is made.

To resolve this, adjust the Batch Size setting in the integration to reduce the number of records returned per pagination call.

The Enable Data Deduplication option lets you avoid ingesting duplicate events. By default, this option is set to false, so duplicate events can be ingested. When this option is enabled, a fingerprint processor calculates a hash from a set of CrowdStrike fields that uniquely identify the event. The hash is assigned to the Elasticsearch _id field, which makes the document unique and prevents duplicates.

If duplicate events are ingested, the integration's event.id field can help you find them: it is populated by concatenating CrowdStrike fields that uniquely identify the event. These fields are id, aid, and cid from the CrowdStrike event, separated by a pipe character (|).

For example, if your CrowdStrike event contains id: 123, aid: 456, and cid: 789 then the event.id would be 123|456|789.
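The concatenation can be sketched as follows. The `build_event_id` function follows the rule stated above. The `fingerprint` function is only an illustration of hash-based deduplication: the exact fields and hash method used by the integration's fingerprint processor are not specified here, so SHA-256 over the same three fields is an assumption.

```python
import hashlib
from typing import Mapping


def build_event_id(event: Mapping[str, object]) -> str:
    """Join id, aid, and cid with a pipe, as described for event.id."""
    return "|".join(str(event[field]) for field in ("id", "aid", "cid"))


def fingerprint(event: Mapping[str, object]) -> str:
    """Illustrative document ID: SHA-256 of the identifying fields (assumed method)."""
    return hashlib.sha256(build_event_id(event).encode("utf-8")).hexdigest()


if __name__ == "__main__":
    event = {"id": 123, "aid": 456, "cid": 789}
    print(build_event_id(event))
    # Identical events hash to the same value, so a second copy would
    # overwrite the first document instead of creating a duplicate.
    print(fingerprint(event) == fingerprint(dict(event)))
```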

The values used in event.severity are consistent with Elastic Detection Rules.

| Severity Name | event.severity |

|---|---|

| Low, Info or Informational | 21 |

| Medium | 47 |

| High | 73 |

| Critical | 99 |

The integration sets event.severity according to the mapping in the table above. If the severity name is not available from the original document, it is determined from the numeric severity value according to the following table.

| CrowdStrike Severity | Severity Name |

|---|---|

| 0 - 19 | info |

| 20 - 39 | low |

| 40 - 59 | medium |

| 60 - 79 | high |

| 80 - 100 | critical |
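The two tables above can be combined into a small lookup, sketched below. The function names are hypothetical, and the sketch only reproduces the documented mapping rather than the integration's ingest pipeline.

```python
from typing import Optional

# Severity name -> event.severity, from the first table.
EVENT_SEVERITY = {
    "info": 21,
    "informational": 21,
    "low": 21,
    "medium": 47,
    "high": 73,
    "critical": 99,
}


def severity_name(score: int) -> str:
    """Derive the severity name from a numeric CrowdStrike severity (second table)."""
    if not 0 <= score <= 100:
        raise ValueError(f"severity out of range: {score}")
    if score < 20:
        return "info"
    if score < 40:
        return "low"
    if score < 60:
        return "medium"
    if score < 80:
        return "high"
    return "critical"


def event_severity(name: Optional[str] = None, score: Optional[int] = None) -> int:
    """Prefer the severity name; fall back to the numeric score when it is missing."""
    if name is None:
        if score is None:
            raise ValueError("either name or score is required")
        name = severity_name(score)
    return EVENT_SEVERITY[name.lower()]


if __name__ == "__main__":
    print(severity_name(30), event_severity(score=30))
```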

In 3.16.2 the FDR lookup transform destination indices and stable aliases were moved out of the logs-* namespace so the empty lookup indices no longer match the default Security Solution logs-* index pattern (which produced "missing the timestamp field @timestamp" warnings on detection rules):

| Before | After |
|---|---|
| logs-crowdstrike_lookup.aidmaster | crowdstrike_lookup.aidmaster |
| logs-crowdstrike_lookup.userinfo | crowdstrike_lookup.userinfo |
| logs-crowdstrike_lookup.dest_aidmaster-1 | crowdstrike_lookup.dest_aidmaster-1 |
| logs-crowdstrike_lookup.dest_userinfo-1 | crowdstrike_lookup.dest_userinfo-1 |

If you wrote custom ES|QL queries, dashboards, or detection rules against the old alias names, update them to the new names. The bundled dashboards have been updated. After upgrading, the old logs-crowdstrike_lookup.* indices and aliases left behind by previous installs are unused and can be safely deleted.
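If you keep custom queries in files or source control, the rename can be applied mechanically. The sketch below assumes the only change needed is dropping the `logs-` prefix from `logs-crowdstrike_lookup.*` names; the function name is hypothetical, and you should review the rewritten queries before using them.

```python
import re

# Matches the old lookup prefix; data streams such as logs-crowdstrike.fdr-* are not affected.
_OLD_LOOKUP_PREFIX = re.compile(r"\blogs-crowdstrike_lookup\.")


def migrate_lookup_names(query: str) -> str:
    """Rewrite old lookup index and alias names to the names used from 3.16.2."""
    return _OLD_LOOKUP_PREFIX.sub("crowdstrike_lookup.", query)


if __name__ == "__main__":
    old_query = (
        "FROM logs-crowdstrike.fdr-*\n"
        "| LOOKUP JOIN logs-crowdstrike_lookup.aidmaster ON host.id"
    )
    print(migrate_lookup_names(old_query))
```

The rewrite is idempotent, so running it again on an already migrated query leaves it unchanged.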

When the integration is installed, a transform maintains the latest host metadata (aidmaster) per host in a lookup index. You can enrich FDR event data with this metadata at query time using ES|QL LOOKUP JOIN on host.id.

Lookup index: crowdstrike_lookup.aidmaster — stable alias for the aidmaster lookup data maintained by the integration transform. The backing destination index is managed by the package and may change when you upgrade; use this alias in queries so joins keep working across versions. The lookup retains only host.id and crowdstrike.info.*; ECS host fields from aidmaster are stored under crowdstrike.info.host.* (e.g. crowdstrike.info.host.hostname, crowdstrike.info.host.cid, crowdstrike.info.host.os_version).

Example ES|QL query:

FROM logs-crowdstrike.fdr-*

| WHERE aws.s3.object.key LIKE "*/data/*"

| LOOKUP JOIN crowdstrike_lookup.aidmaster ON host.id

| KEEP @timestamp, event.action, host.id, crowdstrike.info.host.hostname

| LIMIT 20

Elasticsearch 8.19+ is required for LOOKUP JOIN to resolve an alias. Use crowdstrike_lookup.aidmaster as in the example above. On releases before 8.19, LOOKUP JOIN must target the concrete transform destination index instead: in Kibana go to Stack Management → Transforms, open the CrowdStrike latest aidmaster transform, and use the destination_index name shown there (that name can change with the integration version).

Using enriched fields: Enrichment from the lookup is under the crowdstrike.info.host.* namespace (e.g. crowdstrike.info.host.hostname for hostname, crowdstrike.info.host.cid for customer ID). Use these fields in dashboards and detection rules when building on query-time enrichment.

Ingest-time versus query-time: The FDR integration's Enrich Host and User Metadata option (enrich_metadata, on by default) uses the Elastic Agent (Filebeat) metadata cache to attach aidmaster and userinfo to events at ingest time. If you rely on query-time host enrichment only (transform + LOOKUP JOIN above), set Enrich Host and User Metadata to Off so host metadata is not applied twice. Turning it off also disables ingest-time enrichment from userinfo; if you still need user fields from userinfo on every document, keep ingest-time enrichment enabled or supplement with a separate query pattern. Disabling Enrich Host and User Metadata also makes the Keep Original Host and User Metadata option (keep_metadata) ineffective, and the metadata events are retained.

A second transform maintains the latest user metadata per host-user pair from UserIdentity and UserLogon sensor events in a lookup index. Unlike userinfo directory data (which requires Falcon Discover and covers only Windows), sensor events are available to all FDR customers on all platforms (Windows, macOS, Linux, ChromeOS). You can enrich FDR events with user metadata at query time using ES|QL LOOKUP JOIN.

Lookup index: crowdstrike_lookup.userinfo — stable alias for the user lookup data maintained by the integration transform. The backing destination index is managed by the package and may change when you upgrade; use this alias in queries so joins keep working across versions. The lookup retains only host_user_key and crowdstrike.info.*; user fields are stored under crowdstrike.info.user.* (e.g. crowdstrike.info.user.name, crowdstrike.info.user.domain, crowdstrike.info.user.logon_type).

Composite join key: Because Unix UIDs are local to each host (the same numeric UID can refer to different users on different machines), the user lookup uses a composite key combining both host.id and user.id. Queries must construct this key with EVAL before joining:

FROM logs-crowdstrike.fdr-*

| WHERE aws.s3.object.key LIKE "*/data/*" OR log.file.path LIKE "*/data/*"

| EVAL host_user_key = CONCAT(host.id, "::", user.id)

| LOOKUP JOIN crowdstrike_lookup.userinfo ON host_user_key

| KEEP @timestamp, event.action, host.id, user.id,

crowdstrike.info.user.name, crowdstrike.info.user.domain

| LIMIT 20
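The reason for the composite key can be shown outside ES|QL with a plain dictionary lookup. In the sketch below, the host IDs, UIDs, and user names are made up; the key format mirrors the `CONCAT(host.id, "::", user.id)` expression in the query above.

```python
from typing import Dict, Optional


def host_user_key(host_id: str, user_id: str) -> str:
    """Build the composite key in the same form as the ES|QL EVAL above."""
    return f"{host_id}::{user_id}"


# Hypothetical lookup data: the same Unix UID maps to different users per host.
USER_LOOKUP: Dict[str, str] = {
    host_user_key("host-a", "1000"): "alice",
    host_user_key("host-b", "1000"): "bob",
}


def enrich(event: Dict[str, str], lookup: Dict[str, str]) -> Dict[str, Optional[str]]:
    """Return a copy of the event with the user name attached, or None if unmatched."""
    enriched: Dict[str, Optional[str]] = dict(event)
    key = host_user_key(event["host.id"], event["user.id"])
    enriched["crowdstrike.info.user.name"] = lookup.get(key)
    return enriched


if __name__ == "__main__":
    print(enrich({"host.id": "host-a", "user.id": "1000"}, USER_LOOKUP))
    print(enrich({"host.id": "host-b", "user.id": "1000"}, USER_LOOKUP))
```

Joining on `user.id` alone would merge these two users into one, which is what the composite key prevents.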

Combined host and user enrichment: Both lookups can be chained in a single query. The aidmaster join does not need to come first — host.id comes from the source index, so the EVAL does not depend on it:

FROM logs-crowdstrike.fdr-*

| WHERE aws.s3.object.key LIKE "*/data/*" OR log.file.path LIKE "*/data/*"

| LOOKUP JOIN crowdstrike_lookup.aidmaster ON host.id

| EVAL host_user_key = CONCAT(host.id, "::", user.id)

| LOOKUP JOIN crowdstrike_lookup.userinfo ON host_user_key

| KEEP @timestamp, event.action, host.id, crowdstrike.info.host.hostname,

user.id, crowdstrike.info.user.name, crowdstrike.info.user.domain

| LIMIT 20

Elasticsearch 8.19+ is required for LOOKUP JOIN to resolve an alias. Use crowdstrike_lookup.userinfo as in the examples above. On releases before 8.19, LOOKUP JOIN must target the concrete transform destination index instead: in Kibana go to Stack Management → Transforms, open the CrowdStrike latest userinfo transform, and use the destination_index name shown there (that name can change with the integration version). If you use both host and user lookups on releases before 8.19, you will need two concrete destination index names — one for aidmaster and one for userinfo — both obtainable from Stack Management → Transforms.

Using enriched fields: Enrichment from the user lookup is under the crowdstrike.info.user.* namespace (e.g. crowdstrike.info.user.name for username, crowdstrike.info.user.domain for UPN domain, crowdstrike.info.user.logon_type for logon type). Use these fields in dashboards and ES|QL detection rules when building on query-time enrichment. Note that detection rules using EQL, threshold, or KQL operate on stored documents and cannot use LOOKUP JOIN — those rule types continue to rely on ingest-time cache enrichment for user metadata.

Ingest-time versus query-time: The same Enrich Host and User Metadata option (enrich_metadata) that controls ingest-time host enrichment also controls ingest-time user enrichment from userinfo directory data. Query-time user enrichment via the transform is additive — it works regardless of whether ingest-time enrichment is enabled. If you rely on query-time enrichment only, set Enrich Host and User Metadata to Off so metadata is not applied twice. If both are active, user metadata may appear under crowdstrike.info.user.* from both the ingest-time cache and the query-time lookup; the values should be consistent but the ingest-time cache is populated from userinfo while the query-time lookup uses sensor events, so field availability may differ.

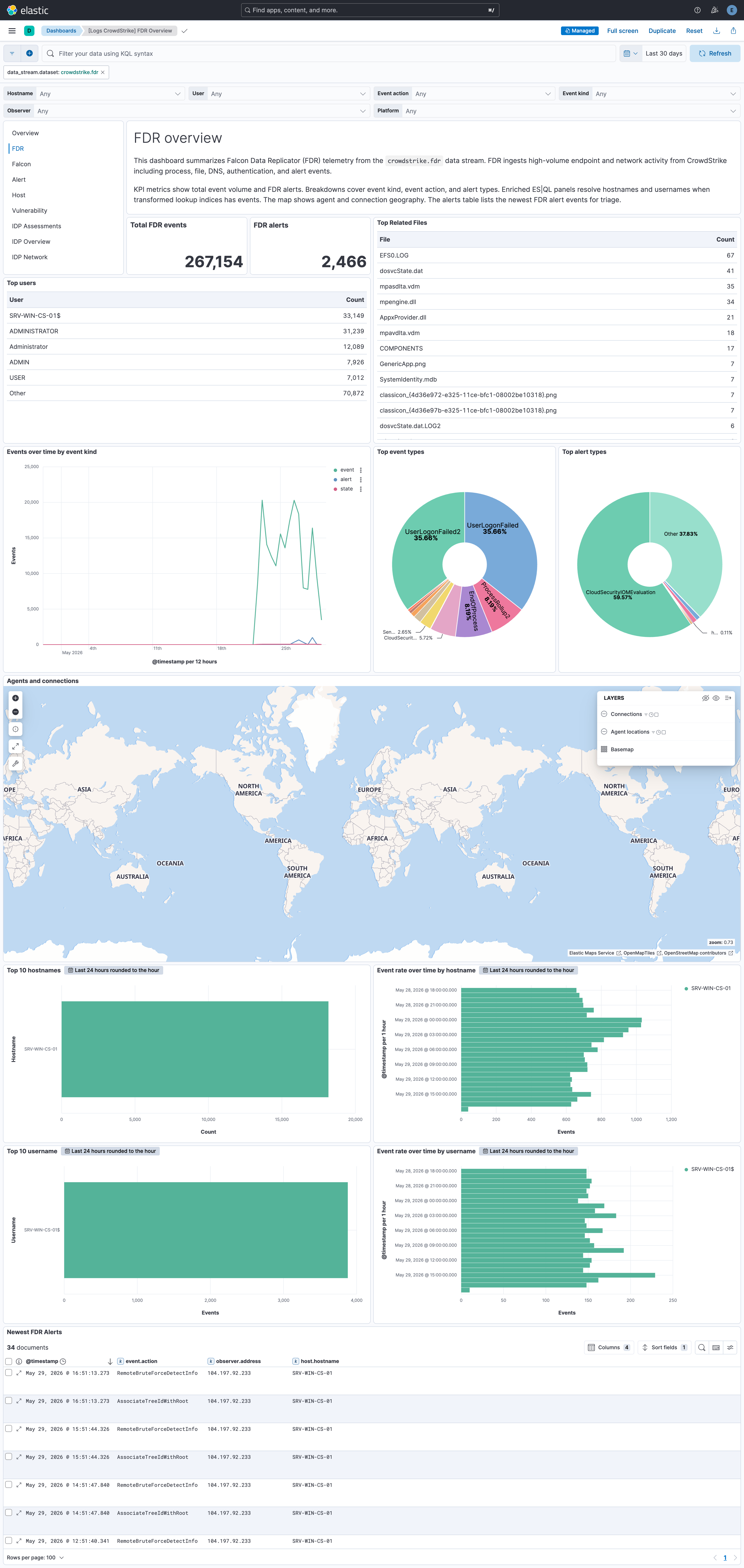

The FDR Overview dashboards include ES|QL visualizations that use LOOKUP JOIN for query-time enrichment. Top 10 hostnames and Event rate over time by hostname join against the aidmaster lookup; Top 10 usernames and Event rate over time by username join against the userinfo lookup. They are reference examples of query-time enrichment that you can reuse in your own dashboards.

ES|QL result row cap: By default, Elasticsearch returns at most 1000 rows for an ES|QL query. If your query does not end with an explicit LIMIT, results are truncated at that cap. See ES|QL limitations for the authoritative limits.

What you can do: Add an explicit | LIMIT … at the end of your ES|QL query when you need more rows than the default. Narrow the time range, filter hosts or indices, or clone a packaged visualization and tune the query for your environment.
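If you generate ES|QL queries programmatically, a small helper can make sure each one ends with an explicit LIMIT. This is a minimal sketch with a hypothetical function name; it only inspects the end of the query text and does not validate the query or the maximum row count your cluster allows.

```python
import re

# Matches a LIMIT command at the very end of the query, for example "| LIMIT 20".
_TRAILING_LIMIT = re.compile(r"\|\s*LIMIT\s+\d+\s*$", re.IGNORECASE)


def with_limit(query: str, rows: int) -> str:
    """Append "| LIMIT <rows>" unless the query already ends with a LIMIT."""
    query = query.rstrip()
    if _TRAILING_LIMIT.search(query):
        return query
    return f"{query}\n| LIMIT {rows}"


if __name__ == "__main__":
    print(with_limit("FROM logs-crowdstrike.fdr-*\n| KEEP @timestamp, host.id", 5000))
```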

This is the alert dataset.

Example

{

"@timestamp": "2023-11-03T18:00:22.328Z",

"agent": {

"ephemeral_id": "efb69ba7-0736-4cf7-a39f-70f3183e7530",

"id": "d541c008-3558-403d-9392-4faa6d42fcb4",

"name": "elastic-agent-43429",

"type": "filebeat",

"version": "8.18.0"

},

"crowdstrike": {

"alert": {

"agent_id": "2ce412d17b334ad4adc8c1c54dbfec4b",

"aggregate_id": "aggind:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778",

"alleged_filetype": "exe",

"cid": "92012896127c4a948236ba7601b886b0",

"cloud_indicator": false,

"cmdline": "\"C:\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\"",

"composite_id": "92012896127c4a8236ba7601b886b0:ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"confidence": 10,

"context_timestamp": "2023-11-03T18:00:31.000Z",

"control_graph_id": "ctg:2ce4127b334ad4adc8c1c54dbfec4b:163208931778",

"crawl_edge_ids": {

"Sensor": [

"KZcZ=__;K&cmqQ]Z=W,QK4W.9(rBfs\\gfmjTblqI^F-_oNnAWQ&-o0:dR/>>2J<d2T/ji6R&RIHe-tZSkP*q?HW;:leq.:kk)>IVMD36[+=kiQDRm.bB?;d\"V0JaQlaltC59Iq6nM?6>ZAs+LbOJ9p9A;9'WV9^H3XEMs8N",

"KZcZA__;?\"cmott@m_k)MSZ^+C?.cg<Lga#0@71X07*LY2teE56*16pL[=!bjF7g@0jOQE'jT6RX_F@sr#RP-U/d[#nm9A,A,W%cl/T@<WalY1K_h%QDBBF;_e7S!!*'!",

"KZd)iK2;s\\ckQl_P*d=Mo?^a7/JKc\\*L48169!7I5;0\\<H^hNG\"ZQ3#U3\"eo<>92t[f!>*b9WLY@H!V0N,BJsNSTD:?/+fY';e<OHh9AmlT?5<gGqK:*L99kat+P)eZ$HR\"Ql@Q!!!$!rr",

"N6=Ks_B9Bncmur)?\\[fV$k/N5;:6@aB$P;R$2XAaPJ?E<G5,UfaP')8#2AY4ff+q?T?b0/RBi-YAeGmb<6Bqp[DZh#I(jObGkjJJaMf\\:#mb;BM\\L[g!\\F*M!!*'!",

"N6B%O'=_7d#%u&d[+LTNDs<3307?8n=GrFI:4YYGCL,cIt-Tuj!&<6:3RbCuNjL#gW&=)E4^/'fp*.bFX@p_$,R6.\"=lV*T*5Vfc.:nkd$+YD:DJ,Ls0[sArC')K%YTc$:@kUQW5s8N",

"N6B%s!\\k)ed$F6>a%iM\"<FTSe/eH8M:<9gf;$$.b??kpC*99aX!Lq:g6:Q3@Ga4Zrb@MaMa]L'YAt$IFBu])\"H^sF$r7gDPf6&CHpVKO3<DgK9,Y/e@V\"b&m!<<'",

"N6CU&%VT\"d$=67=h\\I)/BJH:8-lS!.%\\-!$1@bAhtVO?q4]9'9'haE4N0*-0Uh'-'f',YW3]T=jL3D#N=fJi]Pp-bWej+R9q[%h[p]p26NK8q3b50k9G:.&eM<Qer>__\"59K'R?_='rK/'hA\"r+L5i-*Ut5PI!!*'!",

"N6CUF__;K!d$:[C93.?=/5(5KnM]!L#UbnSY5HOHc#[6A&FE;(naXB4h/OG\"%MDAR=fo41Z]rXc\"J-\\&&V8UW.?I6V*G+,))Ztu_IuCMV#ZJ:QDJ_EjQmjiX#HENY'WD0rVAV$Gl6_+0e:2$8D)):.LUs+8-S$L!!!$!rr",

"N6CUF__;K!d$:\\N43JV0AO56@6D0$!na(s)d.dQ'iI1*uiKt#j?r\"X'\\AtNML2_C__7ic6,8Dc[F<0NTUGtl%HD#?/Y)t8!1X.;G!*FQ9GP-ukQn6I##&$^81(P+hN*-#rf/cUs)Wb\"<_/?I'[##WMh'H[Rcl+!!<<'",

"N6L[G__;K!d\"qhT7k?[D\"Bk:5s%+=>#DM0j$_<r/JG0TCEQ!Ug(be3)&R2JnX+RSqorgC-NCjf6XATBWX(5<L1J1DV>44ZjO9q*d!YLuHhkq!3>3tpi>OPYZp9]5f1#/AlRZL06/I6cl\"d.&=To@9kS!prs8N"

]

},

"crawl_vertex_ids": {

"Sensor": [

"aggind:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778",

"ctg:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778",

"ind:2ce412d17b34ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"mod:2ce412d17b4ad4adc8c1c54dbfec4b:0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4",

"mod:2ce412d17b4ad4adc8c1c54dbfec4b:b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"mod:2ce412d17b334ad4adc8c1c54dbfec4b:caef4ae19056eeb122a0540508fa8984cea960173ada0dc648cb846d6ef5dd33",

"pid:2ce412d17b33d4adc8c1c54dbfec4b:392734873135",

"pid:2ce412d17b334ad4adc8c1c54dbfec4b:392736520876",

"pid:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993",

"quf:2ce412d17b334ad4adc8c1c54dbfec4b:b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425"

]

},

"crawled_timestamp": "2023-11-03T19:00:23.985Z",

"created_timestamp": "2023-11-03T18:01:23.995Z",

"data_domains": [

"Endpoint"

],

"description": "ThisfilemeetstheAdware/PUPAnti-malwareMLalgorithm'slowest-confidencethreshold.",

"device": {

"agent_load_flags": 0,

"agent_local_time": "2023-10-12T03:45:57.753Z",

"agent_version": "7.04.17605.0",

"bios_manufacturer": "ABC",

"bios_version": "F8CN42WW(V2.05)",

"cid": "92012896127c4a948236ba7601b886b0",

"config_id_base": "65994763",

"config_id_build": "17605",

"config_id_platform": 3,

"external_ip": "81.2.69.142",

"first_seen": "2023-04-07T09:36:36.000Z",

"groups": [

"18704e21288243b58e4c76266d38caaf"

],

"hostinfo": {

"active_directory_dn_display": [

"WinComputers",

"WinComputers\\ABC"

],

"domain": "ABC.LOCAL"

},

"hostname": "ABC709-1175",

"id": "2ce412d17b334ad4adc8c1c54dbfec4b",

"last_seen": "2023-11-03T17:51:42.000Z",

"local_ip": "81.2.69.142",

"mac_address": "AB-21-48-61-05-B2",

"machine_domain": "ABC.LOCAL",

"major_version": "10",

"minor_version": "0",

"modified_timestamp": "2023-11-03T17:53:43.000Z",

"os_version": "Windows11",

"ou": [

"ABC",

"WinComputers"

],

"platform_id": "0",

"platform_name": "Windows",

"product_type": "1",

"product_type_desc": "Workstation",

"site_name": "Default-First-Site-Name",

"status": "normal",

"system_manufacturer": "LENOVO",

"system_product_name": "20VE"

},

"falcon_host_link": "https://falcon.us-2.crowdstrike.com/activity-v2/detections/dhjffg:ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"filename": "openvpn-abc-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"filepath": "\\Device\\HarddiskVolume3\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"grandparent_details": {

"cmdline": "C:\\Windows\\system32\\userinit.exe",

"filename": "userinit.exe",

"filepath": "\\Device\\HarddiskVolume3\\Windows\\System32\\userinit.exe",

"local_process_id": "4328",

"md5": "b07f77fd3f9828b2c9d61f8a36609741",

"process_graph_id": "pid:2ce412d17b334ad4adc8c1c54dbfec4b:392734873135",

"process_id": "392734873135",

"sha256": "caef4ae19056eeb122a0540508fa8984cea960173ada0dc648cb846d6ef5dd33",

"timestamp": "2023-10-30T16:49:19.000Z",

"user_graph_id": "uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425",

"user_id": "S-1-5-21-1909377054-3469629671-4104191496-4425",

"user_name": "yuvraj.mahajan"

},

"has_script_or_module_ioc": true,

"id": "ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"indicator_id": "ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"ioc_context": [

{

"ioc_description": "\\Device\\HarddiskVolume3\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"ioc_source": "library_load",

"ioc_type": "hash_sha256",

"ioc_value": "b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"md5": "cdf9cfebb400ce89d5b6032bfcdc693b",

"sha256": "b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"type": "module"

}

],

"ioc_values": [

"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd"

],

"is_synthetic_quarantine_disposition": true,

"local_process_id": "17076",

"logon_domain": "ABSYS",

"md5": "cdf9cfebb400ce89d5b6032bfcdc693b",

"name": "PrewittPupAdwareSensorDetect-Lowest",

"objective": "FalconDetectionMethod",

"parent_details": {

"cmdline": "C:\\WINDOWS\\Explorer.EXE",

"filename": "explorer.exe",

"filepath": "\\Device\\HarddiskVolume3\\Windows\\explorer.exe",

"local_process_id": "1040",

"md5": "8cc3fcdd7d52d2d5221303c213e044ae",

"process_graph_id": "pid:2ce412d17b334ad4adc8c1c54dbfec4b:392736520876",

"process_id": "392736520876",

"sha256": "0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4",

"timestamp": "2023-11-03T18:00:32.000Z",

"user_graph_id": "uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425",

"user_id": "S-1-5-21-1909377054-3469629671-4104191496-4425",

"user_name": "mohit.jha"

},

"parent_process_id": "392736520876",

"pattern_disposition": 2176,

"pattern_disposition_description": "Prevention/Quarantine,processwasblockedfromexecutionandquarantinewasattempted.",

"pattern_disposition_details": {

"blocking_unsupported_or_disabled": false,

"bootup_safeguard_enabled": false,

"critical_process_disabled": false,

"detect": false,

"fs_operation_blocked": false,

"handle_operation_downgraded": false,

"inddet_mask": false,

"indicator": false,

"kill_action_failed": false,

"kill_parent": false,

"kill_process": false,

"kill_subprocess": false,

"operation_blocked": false,

"policy_disabled": false,

"process_blocked": true,

"quarantine_file": true,

"quarantine_machine": false,

"registry_operation_blocked": false,

"rooting": false,

"sensor_only": false,

"suspend_parent": false,

"suspend_process": false

},

"pattern_id": "5761",

"platform": "Windows",

"poly_id": "AACSASiWEnxKlIIaw8LWC-8XINBatE2uYZaWqRAAATiEEfPFwhoY4opnh1CQjm0tvUQp4Lu5eOAx29ZVj-qrGrA==",

"process_end_time": "2023-11-03T18:00:21.000Z",

"process_id": "399748687993",

"process_start_time": "2023-11-03T18:00:13.000Z",

"product": "epp",

"quarantined_files": [

{

"filename": "\\Device\\Volume3\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"id": "2ce412d17b334ad4adc8c1c54dbfec4b_b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"sha256": "b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"state": "quarantined"

}

],

"scenario": "NGAV",

"severity": 30,

"severity_name": "low",

"sha1": "0000000000000000000000000000000000000000",

"sha256": "b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"show_in_ui": true,

"source_products": [

"FalconInsight"

],

"source_vendors": [

"CrowdStrike"

],

"status": "new",

"tactic": "MachineLearning",

"tactic_id": "CSTA0004",

"technique": "Adware/PUP",

"technique_id": "CST0000",

"timestamp": "2023-11-03T18:00:22.328Z",

"tree_id": "1931778",

"tree_root": "38687993",

"triggering_process_graph_id": "pid:2ce4124ad4adc8c1c54dbfec4b:399748687993",

"type": "ldt",

"updated_timestamp": "2023-11-03T19:00:23.985Z",

"user_id": "S-1-5-21-1909377054-3469629671-4104191496-4425",

"user_name": "mohit.jha"

}

},

"data_stream": {

"dataset": "crowdstrike.alert",

"namespace": "96581",

"type": "logs"

},

"device": {

"id": "2ce412d17b334ad4adc8c1c54dbfec4b",

"manufacturer": "LENOVO",

"model": {

"name": "20VE"

}

},

"ecs": {

"version": "8.17.0"

},

"elastic_agent": {

"id": "d541c008-3558-403d-9392-4faa6d42fcb4",

"snapshot": true,

"version": "8.18.0"

},

"event": {

"agent_id_status": "verified",

"category": [

"process"

],

"dataset": "crowdstrike.alert",

"id": "ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600",

"ingested": "2025-10-09T10:20:29Z",

"kind": "alert",

"original": "{\"agent_id\":\"2ce412d17b334ad4adc8c1c54dbfec4b\",\"aggregate_id\":\"aggind:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778\",\"alleged_filetype\":\"exe\",\"cid\":\"92012896127c4a948236ba7601b886b0\",\"cloud_indicator\":\"false\",\"cmdline\":\"\\\"C:\\\\Users\\\\yuvraj.mahajan\\\\AppData\\\\Local\\\\Temp\\\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\\\pfSenseFirewallOpenVPNClients\\\\Windows\\\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\\\"\",\"composite_id\":\"92012896127c4a8236ba7601b886b0:ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600\",\"confidence\":10,\"context_timestamp\":\"2023-11-03T18:00:31Z\",\"control_graph_id\":\"ctg:2ce4127b334ad4adc8c1c54dbfec4b:163208931778\",\"crawl_edge_ids\":{\"Sensor\":[\"KZcZ=__;K\\u0026cmqQ]Z=W,QK4W.9(rBfs\\\\gfmjTblqI^F-_oNnAWQ\\u0026-o0:dR/\\u003e\\u003e2J\\u003cd2T/ji6R\\u0026RIHe-tZSkP*q?HW;:leq.:kk)\\u003eIVMD36[+=kiQDRm.bB?;d\\\"V0JaQlaltC59Iq6nM?6\\u003eZAs+LbOJ9p9A;9'WV9^H3XEMs8N\",\"KZcZA__;?\\\"cmott@m_k)MSZ^+C?.cg\\u003cLga#0@71X07*LY2teE56*16pL[=!bjF7g@0jOQE'jT6RX_F@sr#RP-U/d[#nm9A,A,W%cl/T@\\u003cWalY1K_h%QDBBF;_e7S!!*'!\",\"KZd)iK2;s\\\\ckQl_P*d=Mo?^a7/JKc\\\\*L48169!7I5;0\\\\\\u003cH^hNG\\\"ZQ3#U3\\\"eo\\u003c\\u003e92t[f!\\u003e*b9WLY@H!V0N,BJsNSTD:?/+fY';e\\u003cOHh9AmlT?5\\u003cgGqK:*L99kat+P)eZ$HR\\\"Ql@Q!!!$!rr\",\"N6=Ks_B9Bncmur)?\\\\[fV$k/N5;:6@aB$P;R$2XAaPJ?E\\u003cG5,UfaP')8#2AY4ff+q?T?b0/RBi-YAeGmb\\u003c6Bqp[DZh#I(jObGkjJJaMf\\\\:#mb;BM\\\\L[g!\\\\F*M!!*'!\",\"N6B%O'=_7d#%u\\u0026d[+LTNDs\\u003c3307?8n=GrFI:4YYGCL,cIt-Tuj!\\u0026\\u003c6:3RbCuNjL#gW\\u0026=)E4^/'fp*.bFX@p_$,R6.\\\"=lV*T*5Vfc.:nkd$+YD:DJ,Ls0[sArC')K%YTc$:@kUQW5s8N\",\"N6B%s!\\\\k)ed$F6\\u003ea%iM\\\"\\u003cFTSe/eH8M:\\u003c9gf;$$.b??kpC*99aX!Lq:g6:Q3@Ga4Zrb@MaMa]L'YAt$IFBu])\\\"H^sF$r7gDPf6\\u0026CHpVKO3\\u003cDgK9,Y/e@V\\\"b\\u0026m!\\u003c\\u003c'\",\"N6CU\\u0026%VT\\\"d$=67=h\\\\I)/BJH:8-lS!.%\\\\-!$1@bAhtVO?q4]9'9'haE4N0*-0Uh'-
'f',YW3]T=jL3D#N=fJi]Pp-bWej+R9q[%h[p]p26NK8q3b50k9G:.\\u0026eM\\u003cQer\\u003e__\\\"59K'R?_='rK/'hA\\\"r+L5i-*Ut5PI!!*'!\",\"N6CUF__;K!d$:[C93.?=/5(5KnM]!L#UbnSY5HOHc#[6A\\u0026FE;(naXB4h/OG\\\"%MDAR=fo41Z]rXc\\\"J-\\\\\\u0026\\u0026V8UW.?I6V*G+,))Ztu_IuCMV#ZJ:QDJ_EjQmjiX#HENY'WD0rVAV$Gl6_+0e:2$8D)):.LUs+8-S$L!!!$!rr\",\"N6CUF__;K!d$:\\\\N43JV0AO56@6D0$!na(s)d.dQ'iI1*uiKt#j?r\\\"X'\\\\AtNML2_C__7ic6,8Dc[F\\u003c0NTUGtl%HD#?/Y)t8!1X.;G!*FQ9GP-ukQn6I##\\u0026$^81(P+hN*-#rf/cUs)Wb\\\"\\u003c_/?I'[##WMh'H[Rcl+!!\\u003c\\u003c'\",\"N6L[G__;K!d\\\"qhT7k?[D\\\"Bk:5s%+=\\u003e#DM0j$_\\u003cr/JG0TCEQ!Ug(be3)\\u0026R2JnX+RSqorgC-NCjf6XATBWX(5\\u003cL1J1DV\\u003e44ZjO9q*d!YLuHhkq!3\\u003e3tpi\\u003eOPYZp9]5f1#/AlRZL06/I6cl\\\"d.\\u0026=To@9kS!prs8N\"]},\"crawl_vertex_ids\":{\"Sensor\":[\"aggind:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778\",\"ctg:2ce412d17b334ad4adc8c1c54dbfec4b:163208931778\",\"ind:2ce412d17b34ad4adc8c1c54dbfec4b:399748687993-5761-42627600\",\"mod:2ce412d17b4ad4adc8c1c54dbfec4b:0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4\",\"mod:2ce412d17b4ad4adc8c1c54dbfec4b:b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"mod:2ce412d17b334ad4adc8c1c54dbfec4b:caef4ae19056eeb122a0540508fa8984cea960173ada0dc648cb846d6ef5dd33\",\"pid:2ce412d17b33d4adc8c1c54dbfec4b:392734873135\",\"pid:2ce412d17b334ad4adc8c1c54dbfec4b:392736520876\",\"pid:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993\",\"quf:2ce412d17b334ad4adc8c1c54dbfec4b:b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425\"]},\"crawled_timestamp\":\"2023-11-03T19:00:23.985020992Z\",\"created_timestamp\":\"2023-11-03T18:01:23.995794943Z\",\"data_domains\":[\"Endpoint\"],\"description\":\"ThisfilemeetstheAdware/PUPAnti-malwareMLalgorithm'slowest-confidencethreshold.\",\"device\":{\"agent_load_flags\":\"0\",\"agent_local_time\":\"2023-10-12T03:45:57.753Z\",\"agent_version\":\"7.04.17605
.0\",\"bios_manufacturer\":\"ABC\",\"bios_version\":\"F8CN42WW(V2.05)\",\"cid\":\"92012896127c4a948236ba7601b886b0\",\"config_id_base\":\"65994763\",\"config_id_build\":\"17605\",\"config_id_platform\":\"3\",\"device_id\":\"2ce412d17b334ad4adc8c1c54dbfec4b\",\"external_ip\":\"81.2.69.142\",\"first_seen\":\"2023-04-07T09:36:36Z\",\"groups\":[\"18704e21288243b58e4c76266d38caaf\"],\"hostinfo\":{\"active_directory_dn_display\":[\"WinComputers\",\"WinComputers\\\\ABC\"],\"domain\":\"ABC.LOCAL\"},\"hostname\":\"ABC709-1175\",\"last_seen\":\"2023-11-03T17:51:42Z\",\"local_ip\":\"81.2.69.142\",\"mac_address\":\"ab-21-48-61-05-b2\",\"machine_domain\":\"ABC.LOCAL\",\"major_version\":\"10\",\"minor_version\":\"0\",\"modified_timestamp\":\"2023-11-03T17:53:43Z\",\"os_version\":\"Windows11\",\"ou\":[\"ABC\",\"WinComputers\"],\"platform_id\":\"0\",\"platform_name\":\"Windows\",\"pod_labels\":null,\"product_type\":\"1\",\"product_type_desc\":\"Workstation\",\"site_name\":\"Default-First-Site-Name\",\"status\":\"normal\",\"system_manufacturer\":\"LENOVO\",\"system_product_name\":\"20VE\"},\"falcon_host_link\":\"https://falcon.us-2.crowdstrike.com/activity-v2/detections/dhjffg:ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600\",\"filename\":\"openvpn-abc-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\",\"filepath\":\"\\\\Device\\\\HarddiskVolume3\\\\Users\\\\yuvraj.mahajan\\\\AppData\\\\Local\\\\Temp\\\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\\\pfSenseFirewallOpenVPNClients\\\\Windows\\\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\",\"grandparent_details\":{\"cmdline\":\"C:\\\\Windows\\\\system32\\\\userinit.exe\",\"filename\":\"userinit.exe\",\"filepath\":\"\\\\Device\\\\HarddiskVolume3\\\\Windows\\\\System32\\\\userinit.exe\",\"local_process_id\":\"4328\",\"md5\":\"b07f77fd3f9828b2c9d61f8a36609741\",\"process_graph_id\":\"pid:2ce412d17b334ad4adc8c1c54dbfec4b:392734873135\",\"proce
ss_id\":\"392734873135\",\"sha256\":\"caef4ae19056eeb122a0540508fa8984cea960173ada0dc648cb846d6ef5dd33\",\"timestamp\":\"2023-10-30T16:49:19Z\",\"user_graph_id\":\"uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425\",\"user_id\":\"S-1-5-21-1909377054-3469629671-4104191496-4425\",\"user_name\":\"yuvraj.mahajan\"},\"has_script_or_module_ioc\":\"true\",\"id\":\"ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600\",\"indicator_id\":\"ind:2ce412d17b334ad4adc8c1c54dbfec4b:399748687993-5761-42627600\",\"ioc_context\":[{\"ioc_description\":\"\\\\Device\\\\HarddiskVolume3\\\\Users\\\\yuvraj.mahajan\\\\AppData\\\\Local\\\\Temp\\\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\\\pfSenseFirewallOpenVPNClients\\\\Windows\\\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\",\"ioc_source\":\"library_load\",\"ioc_type\":\"hash_sha256\",\"ioc_value\":\"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"md5\":\"cdf9cfebb400ce89d5b6032bfcdc693b\",\"sha256\":\"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"type\":\"module\"}],\"ioc_values\":[],\"is_synthetic_quarantine_disposition\":true,\"local_process_id\":\"17076\",\"logon_domain\":\"ABSYS\",\"md5\":\"cdf9cfebb400ce89d5b6032bfcdc693b\",\"name\":\"PrewittPupAdwareSensorDetect-Lowest\",\"objective\":\"FalconDetectionMethod\",\"parent_details\":{\"cmdline\":\"C:\\\\WINDOWS\\\\Explorer.EXE\",\"filename\":\"explorer.exe\",\"filepath\":\"\\\\Device\\\\HarddiskVolume3\\\\Windows\\\\explorer.exe\",\"local_process_id\":\"1040\",\"md5\":\"8cc3fcdd7d52d2d5221303c213e044ae\",\"process_graph_id\":\"pid:2ce412d17b334ad4adc8c1c54dbfec4b:392736520876\",\"process_id\":\"392736520876\",\"sha256\":\"0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4\",\"timestamp\":\"2023-11-03T18:00:32Z\",\"user_graph_id\":\"uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496
-4425\",\"user_id\":\"S-1-5-21-1909377054-3469629671-4104191496-4425\",\"user_name\":\"mohit.jha\"},\"parent_process_id\":\"392736520876\",\"pattern_disposition\":2176,\"pattern_disposition_description\":\"Prevention/Quarantine,processwasblockedfromexecutionandquarantinewasattempted.\",\"pattern_disposition_details\":{\"blocking_unsupported_or_disabled\":false,\"bootup_safeguard_enabled\":false,\"critical_process_disabled\":false,\"detect\":false,\"fs_operation_blocked\":false,\"handle_operation_downgraded\":false,\"inddet_mask\":false,\"indicator\":false,\"kill_action_failed\":false,\"kill_parent\":false,\"kill_process\":false,\"kill_subprocess\":false,\"operation_blocked\":false,\"policy_disabled\":false,\"process_blocked\":true,\"quarantine_file\":true,\"quarantine_machine\":false,\"registry_operation_blocked\":false,\"rooting\":false,\"sensor_only\":false,\"suspend_parent\":false,\"suspend_process\":false},\"pattern_id\":5761,\"platform\":\"Windows\",\"poly_id\":\"AACSASiWEnxKlIIaw8LWC-8XINBatE2uYZaWqRAAATiEEfPFwhoY4opnh1CQjm0tvUQp4Lu5eOAx29ZVj-qrGrA==\",\"process_end_time\":\"1699034421\",\"process_id\":\"399748687993\",\"process_start_time\":\"1699034413\",\"product\":\"epp\",\"quarantined_files\":[{\"filename\":\"\\\\Device\\\\Volume3\\\\Users\\\\yuvraj.mahajan\\\\AppData\\\\Local\\\\Temp\\\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\\\pfSenseFirewallOpenVPNClients\\\\Windows\\\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe\",\"id\":\"2ce412d17b334ad4adc8c1c54dbfec4b_b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"sha256\":\"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"state\":\"quarantined\"}],\"scenario\":\"NGAV\",\"severity\":30,\"sha1\":\"0000000000000000000000000000000000000000\",\"sha256\":\"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd\",\"show_in_ui\":true,\"source_products\":[\"FalconInsight\"],\"source_vendors\":
[\"CrowdStrike\"],\"status\":\"new\",\"tactic\":\"MachineLearning\",\"tactic_id\":\"CSTA0004\",\"technique\":\"Adware/PUP\",\"technique_id\":\"CST0000\",\"timestamp\":\"2023-11-03T18:00:22.328Z\",\"tree_id\":\"1931778\",\"tree_root\":\"38687993\",\"triggering_process_graph_id\":\"pid:2ce4124ad4adc8c1c54dbfec4b:399748687993\",\"type\":\"ldt\",\"updated_timestamp\":\"2023-11-03T19:00:23.985007341Z\",\"user_id\":\"S-1-5-21-1909377054-3469629671-4104191496-4425\",\"user_name\":\"mohit.jha\"}",

"severity": 21,

"type": [

"start"

]

},

"file": {

"name": "openvpn-abc-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"path": "\\Device\\HarddiskVolume3\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe"

},

"host": {

"domain": "ABC.LOCAL",

"hostname": "ABC709-1175",

"id": "2ce412d17b334ad4adc8c1c54dbfec4b",

"ip": [

"81.2.69.142"

],

"mac": [

"AB-21-48-61-05-B2"

],

"os": {

"full": "Windows11",

"platform": "Windows",

"type": "windows"

}

},

"input": {

"type": "cel"

},

"message": "ThisfilemeetstheAdware/PUPAnti-malwareMLalgorithm'slowest-confidencethreshold.",

"process": {

"end": "2023-11-03T18:00:21.000Z",

"entity_id": "399748687993",

"executable": "\\Device\\HarddiskVolume3\\Users\\yuvraj.mahajan\\AppData\\Local\\Temp\\Temp3cc4c329-2896-461f-9dea-88009eb2e8fb_pfSenseFirewallOpenVPNClients-20230823T120504Z-001.zip\\pfSenseFirewallOpenVPNClients\\Windows\\openvpn-cds-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"hash": {

"md5": "cdf9cfebb400ce89d5b6032bfcdc693b",

"sha1": "0000000000000000000000000000000000000000",

"sha256": "b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd"

},

"name": "openvpn-abc-pfSense-UDP4-1194-pfsense-install-2.6.5-I001-amd64.exe",

"parent": {

"command_line": "C:\\WINDOWS\\Explorer.EXE",

"entity_id": "392736520876",

"executable": "\\Device\\HarddiskVolume3\\Windows\\explorer.exe",

"hash": {

"md5": "8cc3fcdd7d52d2d5221303c213e044ae",

"sha256": "0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4"

},

"name": "explorer.exe",

"pid": 392736520876

},

"pid": 399748687993,

"start": "2023-11-03T18:00:13.000Z",

"user": {

"id": "S-1-5-21-1909377054-3469629671-4104191496-4425",

"name": "mohit.jha"

}

},

"related": {

"hash": [

"b07f77fd3f9828b2c9d61f8a36609741",

"cdf9cfebb400ce89d5b6032bfcdc693b",

"b26a6791b72753d2317efd5e1363d93fdd33e611c8b9e08a3b24ea4d755b81fd",

"8cc3fcdd7d52d2d5221303c213e044ae",

"0b25d56bd2b4d8a6df45beff7be165117fbf7ba6ba2c07744f039143866335e4",

"0000000000000000000000000000000000000000"

],

"hosts": [

"ABC.LOCAL",

"ABC709-1175"

],

"ip": [

"81.2.69.142"

],

"user": [

"uid:2ce412d17b334ad4adc8c1c54dbfec4b:S-1-5-21-1909377054-3469629671-4104191496-4425",

"S-1-5-21-1909377054-3469629671-4104191496-4425",

"yuvraj.mahajan",

"mohit.jha"

]

},

"tags": [

"preserve_original_event",

"preserve_duplicate_custom_fields",

"forwarded",

"crowdstrike-alert"

],

"threat": {

"framework": "CrowdStrike Falcon Detections Framework",

"tactic": {

"id": [

"CSTA0004"

],

"name": [

"MachineLearning"

]

},

"technique": {

"id": [

"CST0000"

],

"name": [

"Adware/PUP"

]

}

},

"user": {

"id": "S-1-5-21-1909377054-3469629671-4104191496-4425",

"name": "mohit.jha"

}

}
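In the sample event above, the raw alert preserved in `event.original` carries `process_start_time` and `process_end_time` as epoch-second strings (`"1699034413"` and `"1699034421"`), while the parsed `crowdstrike.alert.*` and `process.*` fields show them as ISO 8601 timestamps. The following Python sketch reproduces that conversion for a trimmed-down stand-in of the raw alert. It illustrates the relationship between the two representations and is not the integration's actual ingest pipeline; the helper name `epoch_seconds_to_iso` is hypothetical.

```python
import json
from datetime import datetime, timezone


def epoch_seconds_to_iso(value: str) -> str:
    """Convert an epoch-seconds string to an ISO 8601 UTC timestamp with milliseconds."""
    moment = datetime.fromtimestamp(int(value), tz=timezone.utc)
    return moment.isoformat(timespec="milliseconds").replace("+00:00", "Z")


# Trimmed-down stand-in for the `event.original` string in the sample event;
# only the two epoch-second fields are kept.
original = json.dumps(
    {"process_start_time": "1699034413", "process_end_time": "1699034421"}
)

raw_alert = json.loads(original)
process_start = epoch_seconds_to_iso(raw_alert["process_start_time"])
process_end = epoch_seconds_to_iso(raw_alert["process_end_time"])

print(process_start)  # 2023-11-03T18:00:13.000Z
print(process_end)    # 2023-11-03T18:00:21.000Z
```

The printed values match the `process.start` and `process.end` fields of the sample event.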

Exported fields

| Field | Description | Type |

|---|---|---|

| @timestamp | Event timestamp. | date |

| crowdstrike.alert.active_directory_authentication_method | long | |

| crowdstrike.alert.activity.browser | keyword | |

| crowdstrike.alert.activity.device | keyword | |

| crowdstrike.alert.activity.id | keyword | |

| crowdstrike.alert.activity.os | keyword | |

| crowdstrike.alert.agent_id | keyword | |

| crowdstrike.alert.agent_scan_id | keyword | |

| crowdstrike.alert.aggregate_id | keyword | |

| crowdstrike.alert.alert_attributes | long | |

| crowdstrike.alert.alleged_filetype | keyword | |

| crowdstrike.alert.assigned_to.name | keyword | |

| crowdstrike.alert.assigned_to.uid | keyword | |

| crowdstrike.alert.assigned_to.uuid | keyword | |

| crowdstrike.alert.associated_files.filepath | keyword | |

| crowdstrike.alert.associated_files.sha256 | keyword | |

| crowdstrike.alert.child_process_ids | keyword | |

| crowdstrike.alert.cid | keyword | |

| crowdstrike.alert.cloud_indicator | boolean | |

| crowdstrike.alert.cmdline | keyword | |

| crowdstrike.alert.command_line | keyword | |

| crowdstrike.alert.comment | keyword | |

| crowdstrike.alert.composite_id | keyword | |

| crowdstrike.alert.confidence | long | |

| crowdstrike.alert.context_timestamp | date | |

| crowdstrike.alert.control_graph_id | keyword | |

| crowdstrike.alert.crawl_edge_ids.Sensor | keyword | |

| crowdstrike.alert.crawl_vertex_ids.Sensor | keyword | |

| crowdstrike.alert.crawled_timestamp | date | |

| crowdstrike.alert.created_timestamp | date | |

| crowdstrike.alert.data_domains | keyword | |

| crowdstrike.alert.description | keyword | |

| crowdstrike.alert.detect_type | keyword | |

| crowdstrike.alert.detection_context | flattened | |

| crowdstrike.alert.device.agent_load_flags | long | |

| crowdstrike.alert.device.agent_local_time | date | |

| crowdstrike.alert.device.agent_version | keyword | |

| crowdstrike.alert.device.bios_manufacturer | keyword | |

| crowdstrike.alert.device.bios_version | keyword | |

| crowdstrike.alert.device.cid | keyword | |

| crowdstrike.alert.device.config_id_base | keyword | |

| crowdstrike.alert.device.config_id_build | keyword | |

| crowdstrike.alert.device.config_id_platform | long | |

| crowdstrike.alert.device.external_ip | ip | |

| crowdstrike.alert.device.first_seen | date | |

| crowdstrike.alert.device.groups | keyword | |

| crowdstrike.alert.device.hostinfo.active_directory_dn_display | keyword | |

| crowdstrike.alert.device.hostinfo.domain | keyword | |

| crowdstrike.alert.device.hostname | keyword | |

| crowdstrike.alert.device.id | keyword | |

| crowdstrike.alert.device.last_seen | date | |

| crowdstrike.alert.device.local_ip | ip | |

| crowdstrike.alert.device.mac_address | keyword | |

| crowdstrike.alert.device.machine_domain | keyword | |

| crowdstrike.alert.device.major_version | keyword | |

| crowdstrike.alert.device.minor_version | keyword | |

| crowdstrike.alert.device.modified_timestamp | date | |

| crowdstrike.alert.device.os_version | keyword | |

| crowdstrike.alert.device.ou | keyword | |

| crowdstrike.alert.device.platform_id | keyword | |

| crowdstrike.alert.device.platform_name | keyword | |

| crowdstrike.alert.device.pod_labels | keyword | |

| crowdstrike.alert.device.product_type | keyword | |

| crowdstrike.alert.device.product_type_desc | keyword | |

| crowdstrike.alert.device.site_name | keyword | |

| crowdstrike.alert.device.status | keyword | |

| crowdstrike.alert.device.system_manufacturer | keyword | |

| crowdstrike.alert.device.system_product_name | keyword | |

| crowdstrike.alert.device.tags | keyword | |

| crowdstrike.alert.display_name | keyword | |

| crowdstrike.alert.documents_accessed.filename | keyword | |

| crowdstrike.alert.documents_accessed.filepath | keyword | |

| crowdstrike.alert.documents_accessed.timestamp | date | |

| crowdstrike.alert.email_sent | boolean | |

| crowdstrike.alert.end_time | date | |

| crowdstrike.alert.event_id | keyword | |

| crowdstrike.alert.executables_written.filename | keyword | |

| crowdstrike.alert.executables_written.filepath | keyword | |

| crowdstrike.alert.executables_written.timestamp | date | |

| crowdstrike.alert.falcon_host_link | keyword | |

| crowdstrike.alert.file_writes.name | keyword | |

| crowdstrike.alert.file_writes.sha256 | keyword | |

| crowdstrike.alert.filename | keyword | |

| crowdstrike.alert.filepath | keyword | |

| crowdstrike.alert.files_accessed.filename | keyword | |

| crowdstrike.alert.files_accessed.filepath | keyword | |

| crowdstrike.alert.files_accessed.timestamp | date | |

| crowdstrike.alert.files_written.filename | keyword | |

| crowdstrike.alert.files_written.filepath | keyword | |

| crowdstrike.alert.files_written.timestamp | date | |

| crowdstrike.alert.global_prevalence | keyword | |

| crowdstrike.alert.grandparent_details.cmdline | keyword | |

| crowdstrike.alert.grandparent_details.filename | keyword | |

| crowdstrike.alert.grandparent_details.filepath | keyword | |

| crowdstrike.alert.grandparent_details.local_process_id | keyword | |

| crowdstrike.alert.grandparent_details.md5 | keyword | |

| crowdstrike.alert.grandparent_details.process_graph_id | keyword | |

| crowdstrike.alert.grandparent_details.process_id | keyword | |

| crowdstrike.alert.grandparent_details.sha256 | keyword | |

| crowdstrike.alert.grandparent_details.timestamp | date | |

| crowdstrike.alert.grandparent_details.user_graph_id | keyword | |

| crowdstrike.alert.grandparent_details.user_id | keyword | |

| crowdstrike.alert.grandparent_details.user_name | keyword | |

| crowdstrike.alert.has_script_or_module_ioc | boolean | |

| crowdstrike.alert.host_name | keyword | |

| crowdstrike.alert.host_type | keyword | |

| crowdstrike.alert.id | keyword | |

| crowdstrike.alert.idp_policy.enforced_externally | long | |

| crowdstrike.alert.idp_policy.mfa_factor_type | long | |

| crowdstrike.alert.idp_policy.mfa_provider | long | |

| crowdstrike.alert.idp_policy.rule_action | long | |

| crowdstrike.alert.idp_policy.rule_id | keyword | |

| crowdstrike.alert.idp_policy.rule_name | keyword | |

| crowdstrike.alert.idp_policy.rule_trigger | long | |

| crowdstrike.alert.image_file_name | keyword | |

| crowdstrike.alert.incident.created | date | |

| crowdstrike.alert.incident.end | date | |

| crowdstrike.alert.incident.id | keyword | |

| crowdstrike.alert.incident.score | double | |

| crowdstrike.alert.incident.start | date | |

| crowdstrike.alert.indicator_id | keyword | |

| crowdstrike.alert.ioc_context.cmdline | keyword | |

| crowdstrike.alert.ioc_context.ioc_description | keyword | |

| crowdstrike.alert.ioc_context.ioc_source | keyword | |

| crowdstrike.alert.ioc_context.ioc_type | keyword | |

| crowdstrike.alert.ioc_context.ioc_value | keyword | |

| crowdstrike.alert.ioc_context.md5 | keyword | |

| crowdstrike.alert.ioc_context.sha256 | keyword | |

| crowdstrike.alert.ioc_context.type | keyword | |

| crowdstrike.alert.ioc_description | keyword | |

| crowdstrike.alert.ioc_source | keyword | |

| crowdstrike.alert.ioc_type | keyword | |

| crowdstrike.alert.ioc_value | keyword | |

| crowdstrike.alert.ioc_values | keyword | |

| crowdstrike.alert.is_synthetic_quarantine_disposition | boolean | |

| crowdstrike.alert.ldap_search_query_attack | long | |

| crowdstrike.alert.local_prevalence | keyword | |

| crowdstrike.alert.local_process_id | keyword | |

| crowdstrike.alert.location_country_code | keyword | |

| crowdstrike.alert.location_latitude_as_int | long | |

| crowdstrike.alert.location_longitude_as_int | long | |

| crowdstrike.alert.logon_domain | keyword | |

| crowdstrike.alert.md5 | keyword | |

| crowdstrike.alert.mitre_attack.pattern_id | keyword | |

| crowdstrike.alert.mitre_attack.tactic | keyword | |

| crowdstrike.alert.mitre_attack.tactic_id | keyword | |

| crowdstrike.alert.mitre_attack.technique | keyword | |

| crowdstrike.alert.mitre_attack.technique_id | keyword | |

| crowdstrike.alert.model_anomaly_indicators | keyword | |

| crowdstrike.alert.name | keyword | |

| crowdstrike.alert.network_accesses.access_timestamp | date | |

| crowdstrike.alert.network_accesses.access_type | long | |

| crowdstrike.alert.network_accesses.connection_direction | keyword | |

| crowdstrike.alert.network_accesses.isIPV6 | boolean | |

| crowdstrike.alert.network_accesses.local_address | ip | |

| crowdstrike.alert.network_accesses.local_port | long | |

| crowdstrike.alert.network_accesses.protocol | keyword | |

| crowdstrike.alert.network_accesses.remote_address | ip | |

| crowdstrike.alert.network_accesses.remote_port | long | |

| crowdstrike.alert.objective | keyword | |

| crowdstrike.alert.operating_system | keyword | |

| crowdstrike.alert.os_name | keyword | |

| crowdstrike.alert.overwatch_note | keyword | |

| crowdstrike.alert.overwatch_note_timestamp | date | |

| crowdstrike.alert.parent_details.cmdline | keyword | |

| crowdstrike.alert.parent_details.filename | keyword | |

| crowdstrike.alert.parent_details.filepath | keyword | |

| crowdstrike.alert.parent_details.local_process_id | keyword | |

| crowdstrike.alert.parent_details.md5 | keyword | |

| crowdstrike.alert.parent_details.process_graph_id | keyword | |

| crowdstrike.alert.parent_details.process_id | keyword | |

| crowdstrike.alert.parent_details.sha256 | keyword | |

| crowdstrike.alert.parent_details.timestamp | date | |

| crowdstrike.alert.parent_details.user_graph_id | keyword | |

| crowdstrike.alert.parent_details.user_id | keyword | |

| crowdstrike.alert.parent_details.user_name | keyword | |

| crowdstrike.alert.parent_process_id | keyword | |

| crowdstrike.alert.pattern_disposition | long | |

| crowdstrike.alert.pattern_disposition_description | keyword | |

| crowdstrike.alert.pattern_disposition_details.blocking_unsupported_or_disabled | boolean | |

| crowdstrike.alert.pattern_disposition_details.bootup_safeguard_enabled | boolean | |

| crowdstrike.alert.pattern_disposition_details.containment_file_system | boolean | |

| crowdstrike.alert.pattern_disposition_details.critical_process_disabled | boolean | |

| crowdstrike.alert.pattern_disposition_details.detect | boolean | |

| crowdstrike.alert.pattern_disposition_details.fs_operation_blocked | boolean | |

| crowdstrike.alert.pattern_disposition_details.handle_operation_downgraded | boolean | |

| crowdstrike.alert.pattern_disposition_details.inddet_mask | boolean | |

| crowdstrike.alert.pattern_disposition_details.indicator | boolean | |

| crowdstrike.alert.pattern_disposition_details.kill_action_failed | boolean | |

| crowdstrike.alert.pattern_disposition_details.kill_parent | boolean | |

| crowdstrike.alert.pattern_disposition_details.kill_process | boolean | |

| crowdstrike.alert.pattern_disposition_details.kill_subprocess | boolean | |

| crowdstrike.alert.pattern_disposition_details.mfa_required | boolean | |

| crowdstrike.alert.pattern_disposition_details.operation_blocked | boolean | |

| crowdstrike.alert.pattern_disposition_details.policy_disabled | boolean | |

| crowdstrike.alert.pattern_disposition_details.prevention_provisioning_enabled | boolean | |

| crowdstrike.alert.pattern_disposition_details.process_blocked | boolean | |

| crowdstrike.alert.pattern_disposition_details.quarantine_file | boolean | |

| crowdstrike.alert.pattern_disposition_details.quarantine_machine | boolean | |

| crowdstrike.alert.pattern_disposition_details.registry_operation_blocked | boolean | |

| crowdstrike.alert.pattern_disposition_details.response_action_already_applied | boolean | |

| crowdstrike.alert.pattern_disposition_details.response_action_failed | boolean | |

| crowdstrike.alert.pattern_disposition_details.response_action_triggered | boolean | |

| crowdstrike.alert.pattern_disposition_details.rooting | boolean | |

| crowdstrike.alert.pattern_disposition_details.sensor_only | boolean | |

| crowdstrike.alert.pattern_disposition_details.suspend_parent | boolean | |

| crowdstrike.alert.pattern_disposition_details.suspend_process | boolean | |

| crowdstrike.alert.pattern_id | keyword | |

| crowdstrike.alert.platform | keyword | |

| crowdstrike.alert.poly_id | keyword | |

| crowdstrike.alert.prevented | boolean | |

| crowdstrike.alert.process_end_time | date | |

| crowdstrike.alert.process_id | keyword | |

| crowdstrike.alert.process_start_time | date | |

| crowdstrike.alert.product | keyword | |

| crowdstrike.alert.protocol_anomaly_classification | long | |

| crowdstrike.alert.quarantined | boolean | |

| crowdstrike.alert.quarantined_files.filename | keyword | |

| crowdstrike.alert.quarantined_files.id | keyword | |

| crowdstrike.alert.quarantined_files.sha256 | keyword | |

| crowdstrike.alert.quarantined_files.state | keyword | |

| crowdstrike.alert.rule_group_id | keyword | |

| crowdstrike.alert.rule_group_name | keyword | |

| crowdstrike.alert.rule_instance_created_by | keyword | |

| crowdstrike.alert.rule_instance_id | keyword | |

| crowdstrike.alert.rule_instance_name | keyword | |

| crowdstrike.alert.rule_instance_version | keyword | |

| crowdstrike.alert.scan_id | keyword | |

| crowdstrike.alert.scenario | keyword | |

| crowdstrike.alert.seconds_to_resolved | long | |

| crowdstrike.alert.seconds_to_triaged | long | |

| crowdstrike.alert.severity | long | |

| crowdstrike.alert.severity_name | keyword | |

| crowdstrike.alert.sha1 | keyword | |

| crowdstrike.alert.sha256 | keyword | |

| crowdstrike.alert.show_in_ui | boolean | |

| crowdstrike.alert.source.account_azure_id | keyword | |

| crowdstrike.alert.source.account_domain | keyword | |

| crowdstrike.alert.source.account_name | keyword | |

| crowdstrike.alert.source.account_object_guid | keyword | |

| crowdstrike.alert.source.account_object_sid | keyword | |

| crowdstrike.alert.source.account_sam_account_name | keyword | |

| crowdstrike.alert.source.account_upn | keyword | |

| crowdstrike.alert.source.endpoint_account_object_guid | keyword | |

| crowdstrike.alert.source.endpoint_account_object_sid | keyword | |

| crowdstrike.alert.source.endpoint_address_ip4 | ip | |

| crowdstrike.alert.source.endpoint_host_name | keyword | |

| crowdstrike.alert.source.endpoint_ip_address | ip | |

| crowdstrike.alert.source.endpoint_ip_reputation | long | |

| crowdstrike.alert.source.endpoint_sensor_id | keyword | |

| crowdstrike.alert.source.ip_isp_classification | long | |

| crowdstrike.alert.source.ip_isp_domain | keyword | |

| crowdstrike.alert.source_products | keyword | |

| crowdstrike.alert.source_vendors | keyword | |

| crowdstrike.alert.start_time | date | |

| crowdstrike.alert.status | keyword | |

| crowdstrike.alert.tactic | keyword | |

| crowdstrike.alert.tactic_id | keyword | |

| crowdstrike.alert.tags | keyword | |

| crowdstrike.alert.target.account_name | keyword | |

| crowdstrike.alert.target.domain_controller_host_name | keyword | |

| crowdstrike.alert.target.domain_controller_object_guid | keyword | |

| crowdstrike.alert.target.domain_controller_object_sid | keyword | |

| crowdstrike.alert.target.endpoint_account_object_guid | keyword | |

| crowdstrike.alert.target.endpoint_account_object_sid | keyword | |

| crowdstrike.alert.target.endpoint_host_name | keyword | |

| crowdstrike.alert.target.endpoint_sensor_id | keyword | |

| crowdstrike.alert.target.service_access_identifier | keyword | |

| crowdstrike.alert.technique | keyword | |

| crowdstrike.alert.technique_id | keyword | |

| crowdstrike.alert.template_instance_id | keyword | |

| crowdstrike.alert.timestamp | date | |

| crowdstrike.alert.tree_id | keyword | |

| crowdstrike.alert.tree_root | keyword | |

| crowdstrike.alert.triggering_process_graph_id | keyword | |

| crowdstrike.alert.type | keyword | |

| crowdstrike.alert.updated_timestamp | date | |

| crowdstrike.alert.user_id | keyword | |

| crowdstrike.alert.user_name | keyword | |

| crowdstrike.alert.user_principal | keyword | |

| crowdstrike.alert.worker_node_name | keyword | |

| data_stream.dataset | Data stream dataset. | constant_keyword |

| data_stream.namespace | Data stream namespace. | constant_keyword |

| data_stream.type | Data stream type. | constant_keyword |

| event.dataset | Event dataset. | constant_keyword |

| event.module | Event module. | constant_keyword |

| input.type | Type of filebeat input. | keyword |

| log.offset | Log offset. | long |

| tags | List of keywords used to tag each event. | keyword |

| threat.framework | Name of the threat framework used to further categorize and classify the tactic and technique of the reported threat. Framework classification can be provided by detecting systems, evaluated at ingest time, or retrospectively tagged to events. | keyword |

| threat.tactic.id | The id of tactic used by this threat. You can use a MITRE ATT&CK® tactic, for example. (ex. https://attack.mitre.org/tactics/TA0002/) | keyword |

| threat.technique.id | The id of technique used by this threat. You can use a MITRE ATT&CK® technique, for example. (ex. https://attack.mitre.org/techniques/T1059/) | keyword |
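The `threat.tactic.id` and `threat.technique.id` descriptions above point at MITRE ATT&CK pages. As a minimal sketch, the helper below turns such an ID into its reference URL. The function name `attack_url` is hypothetical and not part of the integration; it assumes IDs follow the usual `TAxxxx` (tactic) and `Txxxx` or `Txxxx.yyy` (technique or sub-technique) patterns.

```python
ATTACK_BASE = "https://attack.mitre.org"


def attack_url(attack_id: str) -> str:
    """Hypothetical helper: build the MITRE ATT&CK page URL for an ID."""
    attack_id = attack_id.strip().upper()
    if attack_id.startswith("TA"):
        # Tactic IDs, for example TA0002.
        return f"{ATTACK_BASE}/tactics/{attack_id}/"
    if attack_id.startswith("T"):
        # Sub-techniques such as T1059.001 are served under /techniques/T1059/001/.
        return f"{ATTACK_BASE}/techniques/{attack_id.replace('.', '/')}/"
    raise ValueError(f"not an ATT&CK tactic or technique ID: {attack_id!r}")


if __name__ == "__main__":
    print(attack_url("TA0002"))
    print(attack_url("T1059"))
```

A helper like this could be used when enriching dashboards or notifications with links built from the values stored in these two fields.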

This is the falcon dataset.

Example

```json
{
  "@timestamp": "2023-11-02T13:41:34.000Z",
  "agent": {
    "ephemeral_id": "8f4a039c-66d4-439c-a43f-c5a95f653dd4",
    "id": "67072e92-576d-47d8-8a43-ebb347b4250b",
    "name": "elastic-agent-93422",
    "type": "filebeat",
    "version": "8.18.1"
  },
  "crowdstrike": {
    "event": {
      "AgentIdString": "fffffffff33333",
      "SessionId": "1111-fffff-4bb4-99c1-74c13cfc3e5a"
    },
    "metadata": {
      "customerIDString": "abcabcabc22221",
      "eventType": "RemoteResponseSessionStartEvent",
      "offset": 1,
      "version": "1.0"
    }
  },
  "data_stream": {
    "dataset": "crowdstrike.falcon",
    "namespace": "99576",
    "type": "logs"
  },
  "ecs": {
    "version": "8.17.0"
  },
  "elastic_agent": {
    "id": "67072e92-576d-47d8-8a43-ebb347b4250b",
    "snapshot": false,
    "version": "8.18.1"
  },
  "event": {
    "action": [
      "remote_response_session_start_event"
    ],
    "agent_id_status": "verified",
    "category": [
      "network",
      "session"
    ],
    "created": "2023-11-02T13:41:34.000Z",
    "dataset": "crowdstrike.falcon",
    "ingested": "2025-05-30T08:29:21Z",
    "kind": "event",
    "original": "{\"event\":{\"AgentIdString\":\"fffffffff33333\",\"HostnameField\":\"UKCHUDL00206\",\"SessionId\":\"1111-fffff-4bb4-99c1-74c13cfc3e5a\",\"StartTimestamp\":1698932494,\"UserName\":\"admin.rose@example.com\"},\"metadata\":{\"customerIDString\":\"abcabcabc22221\",\"eventCreationTime\":1698932494000,\"eventType\":\"RemoteResponseSessionStartEvent\",\"offset\":1,\"version\":\"1.0\"}}",
    "start": "2023-11-02T13:41:34.000Z",
    "type": [
      "start"
    ]
  },
  "host": {
    "name": "UKCHUDL00206"
  },
  "input": {
    "type": "streaming"
  },
  "message": "Remote response session started.",
  "observer": {
    "product": "Falcon",
    "vendor": "Crowdstrike"
  },
  "related": {
    "hosts": [
      "UKCHUDL00206"
    ],
    "user": [
      "admin.rose",
      "admin.rose@example.com"
    ]
  },
  "tags": [
    "preserve_original_event",
    "forwarded",
    "crowdstrike-falcon"
  ],
  "user": {
    "domain": "example.com",
    "email": "admin.rose@example.com",
    "name": "admin.rose"
  }
}
```
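To make the relationship between `event.original` and the parsed fields in the example above easier to follow, here is a minimal Python sketch that reproduces a few of them from the raw record. It is an illustration only, not the integration's actual ingest pipeline: the helper names `to_snake_case` and `derive_fields` are hypothetical, and the mapping rules (epoch milliseconds to `@timestamp`, CamelCase `eventType` to `event.action`, splitting `UserName` at `@`) are inferred from this one example.

```python
import json
import re
from datetime import datetime, timezone

# Raw Event Streams record, copied from `event.original` in the example above.
RAW = '{"event":{"AgentIdString":"fffffffff33333","HostnameField":"UKCHUDL00206","SessionId":"1111-fffff-4bb4-99c1-74c13cfc3e5a","StartTimestamp":1698932494,"UserName":"admin.rose@example.com"},"metadata":{"customerIDString":"abcabcabc22221","eventCreationTime":1698932494000,"eventType":"RemoteResponseSessionStartEvent","offset":1,"version":"1.0"}}'


def to_snake_case(name: str) -> str:
    """Convert a CamelCase event type to snake_case."""
    return re.sub(r"(?<!^)(?=[A-Z])", "_", name).lower()


def derive_fields(raw: str) -> dict:
    """Hypothetical helper: approximate a few parsed fields from a raw record."""
    doc = json.loads(raw)
    event, meta = doc["event"], doc["metadata"]
    # eventCreationTime is in epoch milliseconds.
    created = datetime.fromtimestamp(meta["eventCreationTime"] / 1000, tz=timezone.utc)
    millis = created.microsecond // 1000
    # Assumed rule: the part before "@" is the user name, the rest is the domain.
    name, _, domain = event["UserName"].partition("@")
    return {
        "@timestamp": created.strftime("%Y-%m-%dT%H:%M:%S.") + f"{millis:03d}Z",
        "event.action": [to_snake_case(meta["eventType"])],
        "host.name": event["HostnameField"],
        "user.email": event["UserName"],
        "user.name": name,
        "user.domain": domain,
    }


if __name__ == "__main__":
    for field, value in derive_fields(RAW).items():
        print(f"{field}: {value}")
```

For this record, the derived values match the corresponding fields of the example document, which can help when checking that a custom pipeline or test fixture agrees with what the integration indexes.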

Exported fields

| Field | Description | Type |

|---|---|---|

| @timestamp | Event timestamp. | date |

| agent.id | Unique identifier of this agent (if one exists). Example: For Beats this would be beat.id. | keyword |

| agent.name | Custom name of the agent. This is a name that can be given to an agent. This can be helpful if for example two Filebeat instances are running on the same host but a human readable separation is needed on which Filebeat instance data is coming from. | keyword |

| agent.type | Type of the agent. The agent type always stays the same and should be given by the agent used. For Filebeat, the agent type is always Filebeat, even if two Filebeat instances run on the same machine. | keyword |

| agent.version | Version of the agent. | keyword |

| cloud.image.id | Image ID for the cloud instance. | keyword |

| crowdstrike.event.AccountCreationTimeStamp | The timestamp of when the source account was created in Active Directory. | date |

| crowdstrike.event.AccountId | | keyword |

| crowdstrike.event.ActivityId | ID of the activity that triggered the detection. | keyword |

| crowdstrike.event.AddedPrivilege | The difference between their current and previous list of privileges. | keyword |

| crowdstrike.event.AdditionalAccountObjectGuid | Additional involved user object GUID. | keyword |

| crowdstrike.event.AdditionalAccountObjectSid | Additional involved user object SID. | keyword |

| crowdstrike.event.AdditionalAccountUpn | Additional involved user UPN. | keyword |

| crowdstrike.event.AdditionalActivityId | ID of an additional activity related to the detection. | keyword |

| crowdstrike.event.AdditionalEndpointAccountObjectGuid | Additional involved endpoint object GUID. | keyword |

| crowdstrike.event.AdditionalEndpointAccountObjectSid | Additional involved endpoint object SID. | keyword |

| crowdstrike.event.AdditionalEndpointSensorId | Additional involved endpoint agent ID. | keyword |

| crowdstrike.event.AdditionalLocationCountryCode | Additional involved country code. | keyword |

| crowdstrike.event.AdditionalSsoApplicationIdentifier | Additional application identifier. | keyword |

| crowdstrike.event.AgentId | | keyword |

| crowdstrike.event.AgentIdString | | keyword |

| crowdstrike.event.AggregateId | | keyword |

| crowdstrike.event.AnodeIndicators | | nested |

| crowdstrike.event.AnomalousTicketContentClassification | Ticket signature analysis. | keyword |

| crowdstrike.event.AssociatedFile | The file associated with the triggering indicator. | keyword |

| crowdstrike.event.Attributes | JSON objects containing additional information about the event. | flattened |

| crowdstrike.event.AuditKeyValues | Fields that were changed in this event. | nested |

| crowdstrike.event.AuditKeyValues.Key | | keyword |

| crowdstrike.event.AuditKeyValues.ValueString | | keyword |

| crowdstrike.event.Category | IDP incident category. | keyword |

| crowdstrike.event.CertificateTemplateIdentifier | The ID of the certificate template. | keyword |

| crowdstrike.event.CertificateTemplateName | Name of the certificate template. | keyword |

| crowdstrike.event.Certificates | Provides one or more JSON objects that include related SSL/TLS certificates. | nested |

| crowdstrike.event.CloudPlatform | | keyword |

| crowdstrike.event.CloudProvider | | keyword |

| crowdstrike.event.CloudService | | keyword |

| crowdstrike.event.Commands | Commands run in a remote session. | keyword |

| crowdstrike.event.CompositeId | Global unique identifier that identifies a unique alert. | keyword |

| crowdstrike.event.ComputerName | Name of the computer where the detection occurred. | keyword |

| crowdstrike.event.ContentPatternCounts | | nested |

| crowdstrike.event.ContentPatterns.ConfidenceLevel | | long |

| crowdstrike.event.ContentPatterns.ID | | keyword |

| crowdstrike.event.ContentPatterns.MatchCount | | long |

| crowdstrike.event.ContentPatterns.Name | | keyword |

| crowdstrike.event.CustomerId | Customer identifier. | keyword |

| crowdstrike.event.DataDomains | Data domains of the event that was the primary indicator or created it. | keyword |

| crowdstrike.event.Description | | keyword |

| crowdstrike.event.Destination | | nested |

| crowdstrike.event.Destination.Channel | | keyword |

| crowdstrike.event.DetectId | Unique ID associated with the detection. | keyword |

| crowdstrike.event.DetectName | Name of the detection. | keyword |

| crowdstrike.event.DetectionType | | keyword |

| crowdstrike.event.DeviceId | Device on which the event occurred. | keyword |

| crowdstrike.event.DnsRequests | Detected DNS requests made by a process. | nested |

| crowdstrike.event.DocumentsAccessed | Detected documents accessed by a process. | nested |

| crowdstrike.event.DomainName | | keyword |

| crowdstrike.event.EgressEventId | | keyword |

| crowdstrike.event.EgressSessionId | | keyword |

| crowdstrike.event.EmailAddresses | Summary list of all associated entity email addresses. | keyword |

| crowdstrike.event.EnvironmentVariables | Provides one or more JSON objects that include related environment variables. | nested |

| crowdstrike.event.EventTimestamp | | date |

| crowdstrike.event.EventType | CrowdStrike provided event type. | keyword |

| crowdstrike.event.ExecutablesWritten | Detected executables written to disk by a process. | nested |

| crowdstrike.event.ExecutablesWritten.FileName | | keyword |

| crowdstrike.event.ExecutablesWritten.FilePath | | keyword |

| crowdstrike.event.ExecutablesWritten.Timestamp | | keyword |

| crowdstrike.event.ExecutionID | | keyword |

| crowdstrike.event.ExecutionMetadata.ExecutionDuration | | long |

| crowdstrike.event.ExecutionMetadata.ExecutionStart | | date |

| crowdstrike.event.ExecutionMetadata.ReportFileName | | keyword |

| crowdstrike.event.ExecutionMetadata.ResultCount | | long |

| crowdstrike.event.ExecutionMetadata.ResultID | | keyword |

| crowdstrike.event.ExecutionMetadata.SearchWindowEnd | | date |

| crowdstrike.event.ExecutionMetadata.SearchWindowStart | | date |

| crowdstrike.event.FalconHostLink | | keyword |

| crowdstrike.event.FileCategoryCounts | | nested |

| crowdstrike.event.FileName | | keyword |

| crowdstrike.event.FilePath | | keyword |

| crowdstrike.event.FileType.Type.CategoryID | | keyword |

| crowdstrike.event.FileType.Type.CategoryName | | keyword |

| crowdstrike.event.FileType.Type.Description | | keyword |

| crowdstrike.event.FileType.Type.ID | | keyword |

| crowdstrike.event.FileType.Type.Name | | keyword |

| crowdstrike.event.FilesAccessed.FileName | | keyword |

| crowdstrike.event.FilesAccessed.FilePath | | keyword |

| crowdstrike.event.FilesAccessed.Timestamp | | date |

| crowdstrike.event.FilesEgressedCount | | long |

| crowdstrike.event.FilesWritten.FileName | | keyword |

| crowdstrike.event.FilesWritten.FilePath | | keyword |

| crowdstrike.event.FilesWritten.Timestamp | | date |

| crowdstrike.event.Finding | The details of the finding. | keyword |

| crowdstrike.event.FineScore | The highest incident score reached as of the time the event was sent. | float |

| crowdstrike.event.Flags.Audit | CrowdStrike audit flag. | boolean |

| crowdstrike.event.Flags.Log | CrowdStrike log flag. | boolean |

| crowdstrike.event.Flags.Monitor | CrowdStrike monitor flag. | boolean |

| crowdstrike.event.GrandParentCommandLine | | keyword |

| crowdstrike.event.GrandParentImageFileName | | keyword |

| crowdstrike.event.GrandParentImageFilePath | | keyword |

| crowdstrike.event.GrandparentCommandLine | Grandparent process command line arguments. | keyword |

| crowdstrike.event.GrandparentImageFileName | Path to the grandparent process. | keyword |

| crowdstrike.event.GrandparentImageFilePath | | keyword |

| crowdstrike.event.Highlights | Sections of content that matched the monitoring rule. | text |